Generative AI vs Machine Learning: Key Differences Explained

- February 8, 2026

Generative AI vs machine learning becomes a real question the moment an AI system moves beyond a demo and into daily use. Should it write responses, or should it decide outcomes? Should variability be acceptable, or must results stay consistent every time? Those choices define whether a system scales cleanly or quietly breaks.

Most teams run into trouble because the differences are misunderstood early. Tools blur boundaries, terminology gets mixed, and expectations drift. The result is content systems forced to act like decision engines, or prediction models stretched into roles they were never designed to handle.

This article clears that confusion with practical clarity. You will see how each approach works, where they fundamentally differ, and how to choose the right one before design decisions become costly to undo.

Contents

- 1 What Is Machine Learning?

- 2 Build the right AI approach for your business.

- 3 What Is Generative AI?

- 4 Generative AI vs Machine Learning: The Core Conceptual Difference

- 4.1 Goal and primary output

- 4.2 What the system learns

- 4.3 Type of data each system handles best

- 4.4 How users interact with it

- 4.5 Degree of output predictability

- 4.6 Success criteria and evaluation

- 4.7 Integration into business workflows

- 4.8 Best fit in real operations

- 4.9 Typical role inside enterprise systems

- 5 Side by Side Comparison: Gen AI vs Machine Learning

- 6 When to Use Generative AI vs When to Use Machine Learning

- 7 Can Generative AI and Machine Learning Work Together?

- 8 Building the Right Generative AI and Machine Learning Strategy

- 8.1 We start with strategy, feasibility, and data readiness

- 8.2 We build custom models that match your business context

- 8.3 We take systems from prototype to enterprise deployment

- 8.4 We handle the full lifecycle, including monitoring and retraining

- 8.5 We design for governance, access control, and compliance from day one

- 9 Build the right AI approach for your business.

- 10 Conclusion

- 11 Frequently Asked Questions

What Is Machine Learning?

Machine learning is a subset of artificial intelligence that focuses on enabling systems to learn from data rather than relying on explicitly programmed rules. Instead of being told exactly how to act in every scenario, a machine learning model identifies statistical patterns within historical data.

It then uses those learned patterns to make predictions or decisions when new data appears. This approach is most effective when outcomes need to be consistent, measurable, and grounded in evidence derived from large or continuously growing datasets.

Machine learning is commonly applied to problems involving prediction, classification, ranking, or scoring, where human-defined logic alone would struggle to capture the underlying complexity.

How Machine Learning Works

Machine learning systems follow a structured lifecycle that converts raw data into repeatable, production-ready predictions. The typical workflow includes the following steps:

- Problem framing: Teams define a specific and measurable goal, such as predicting customer churn or identifying fraudulent transactions. The quality of this framing directly affects model performance.

- Data collection and labeling: Historical data relevant to the objective is gathered. In supervised learning, each data point includes a known outcome so the model can learn correct associations.

- Feature construction: Raw data is transformed into structured inputs that represent meaningful signals. This step often has a greater impact on results than the choice of algorithm.

- Model selection: An algorithm is chosen based on data volume, complexity, latency requirements, and explainability constraints. Simpler models are often preferred in production settings.

- Training and optimization: The model adjusts its internal parameters to minimize error on training data while avoiding memorization of noise.

- Validation and testing: Performance is evaluated on unseen data to confirm that the model generalizes and to identify failure patterns.

- Deployment for inference: The trained model is integrated into applications where it produces predictions in real time or batch workflows.

- Monitoring and retraining: As real-world conditions change, model accuracy can decline. Continuous monitoring and periodic retraining are required to maintain reliability.
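The lifecycle above can be sketched end to end in a few lines. This is a minimal illustration rather than a production pipeline: it assumes a synthetic two-feature dataset and a hand-written logistic regression in plain Python. The feature meanings and the names `make_dataset`, `train`, `predict`, and `accuracy` are hypothetical and introduced only for this example.

```python
import math
import random


def make_dataset(n, seed=0):
    """Data collection and labeling: synthetic rows with a known outcome."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        x1 = rng.uniform(-1, 1)  # hypothetical feature, e.g. scaled support tickets
        x2 = rng.uniform(-1, 1)  # hypothetical feature, e.g. scaled drop in usage
        noise = rng.gauss(0, 0.1)
        label = 1 if (2.0 * x1 + 1.0 * x2 + noise) > 0 else 0
        rows.append(([x1, x2], label))
    return rows


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train(rows, epochs=200, lr=0.5):
    """Training: fit logistic regression with batch gradient descent."""
    w, b, n = [0.0, 0.0], 0.0, len(rows)
    for _ in range(epochs):
        gw, gb = [0.0, 0.0], 0.0
        for x, y in rows:
            err = sigmoid(w[0] * x[0] + w[1] * x[1] + b) - y
            gw[0] += err * x[0]
            gw[1] += err * x[1]
            gb += err
        w[0] -= lr * gw[0] / n
        w[1] -= lr * gw[1] / n
        b -= lr * gb / n
    return w, b


def predict(model, x):
    """Inference: return the predicted class (0 or 1) for one row."""
    w, b = model
    return 1 if sigmoid(w[0] * x[0] + w[1] * x[1] + b) >= 0.5 else 0


def accuracy(model, rows):
    """Validation: share of rows where the prediction matches the label."""
    return sum(predict(model, x) == y for x, y in rows) / len(rows)


data = make_dataset(400)
train_rows, test_rows = data[:300], data[300:]  # hold out unseen data
model = train(train_rows)
test_accuracy = accuracy(model, test_rows)
print(f"held-out accuracy: {test_accuracy:.2f}")
```

The held-out split is the important part: the reported accuracy comes from rows the model never saw during training, which mirrors the validation step in the list.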

Key Benefits of Machine Learning

Machine learning offers practical advantages for organizations that rely on data-driven decision making at scale.

- Consistent, testable decision making: Outputs can be evaluated using defined metrics, making performance measurable and comparable over time.

- Strong performance on structured datasets: Transaction records, logs, metrics, and tabular business data align well with machine learning techniques.

- Scalability beyond human capacity: Models can analyze large volumes of data without sacrificing speed or consistency.

- Continuous improvement through retraining: Systems can adapt as new data reflects changes in behavior or operating conditions.

- Objective performance measurement: Accuracy, precision, recall, and error rates provide clear signals of model quality.
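To make the metrics in the last bullet concrete, here is a small sketch that computes accuracy, precision, and recall for a binary classifier. The function name `classification_metrics` and the sample labels are assumptions made for illustration.

```python
def classification_metrics(y_true, y_pred):
    """Compute accuracy, precision, and recall for binary labels (0 or 1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Hypothetical labels: 1 = fraud, 0 = legitimate
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

In this sample there are 3 true positives, 1 false positive, 1 false negative, and 3 true negatives, so all three metrics come out to 0.75.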

Common Machine Learning Use Cases in Production

Machine learning is used when decisions must be repeated at scale and supported by data rather than intuition. In production systems, models typically output scores, probabilities, or rankings that guide automated or human decisions.

Fraud detection and anomaly identification

Models learn normal transaction patterns and assign risk scores to new activities. This allows systems to flag unusual behavior without relying on static rules.

Recommendation and ranking systems

User interaction data is analyzed to estimate relevance, enabling personalized ranking of products, content, or actions in real time.

Demand and capacity forecasting

Historical data is used to model trends and seasonality, helping organizations plan inventory, staffing, and infrastructure more accurately.

Predictive maintenance

Sensor and operational data is analyzed to detect early signs of equipment degradation, reducing unplanned downtime.

Risk scoring and compliance evaluation

Entities are evaluated against learned risk patterns to support approvals, reviews, and regulatory workflows.

Limitations and Risks of Machine Learning

Machine learning improves decision making, but it introduces constraints that must be actively managed.

- Dependence on historical patterns: Models assume future behavior resembles past data, which may not hold during sudden changes.

- Bias from training data: Predictions reflect the distributions present in historical data, including existing imbalances.

- Sensitivity to data quality: Incomplete or inconsistent inputs directly affect model accuracy.

- Model drift over time: Changes in behavior or conditions can reduce performance without ongoing monitoring.

- Limited interpretability for complex models: Some models are difficult to explain, which can be an issue in regulated environments.
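Model drift is one of the few risks above that can be checked with a simple statistic. The sketch below uses the Population Stability Index (PSI), one common choice among several, to compare a feature's distribution at training time with its distribution in production. The function name, bin count, and sample data are assumptions; a frequently cited rule of thumb treats values above roughly 0.25 as a significant shift, but thresholds should be validated per use case.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def bin_fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]       # hypothetical training-time values
shifted = [0.5 + i / 200 for i in range(100)]  # hypothetical production values

print(population_stability_index(baseline, baseline))
print(population_stability_index(baseline, shifted))
```

Identical samples give a PSI of zero, while the shifted sample, which never falls into the lower half of the baseline range, produces a large value that would warrant investigation or retraining.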

Build the right AI approach for your business.

Talk with Webisoft to plan, validate, and deploy AI or machine learning!

What Is Generative AI?

Generative AI is a subset of machine learning that uses deep learning models to create new content in response to an input. Instead of assigning labels, scores, or predictions, these systems generate content by estimating what sequence, structure, or representation is most likely to follow a given input.

At scale, generative AI models learn the distribution of datasets such as language, images, or code, then sample from it to create new outputs. This makes generative AI suitable for tasks where the goal is synthesis, drafting, or variation rather than deterministic decision making.

In practice, generative AI is used when systems need to interact through natural language or create content dynamically. It is also applied to unstructured information where traditional machine learning pipelines are slow to design or maintain.

How Generative AI Works

Generative AI follows a repeatable flow from training to prompt-based generation, with quality controlled through tuning and oversight.

- Select the target output and constraints: Teams define what the system must generate and what it must avoid, like policy violations or sensitive data exposure.

- Train the model on large datasets: The model learns patterns and structure at scale, which is why large language models and other transformer-based architectures, built on self-attention in encoder-decoder or decoder-only form, dominate GenAI deployments.

- Use a generative architecture suited to the modality: Common families include transformers for language, diffusion for images, and GANs for synthetic generation, depending on the output type.

- Accept a prompt or query as the production input: The prompt becomes the control surface for the request, and the system interprets it as structured generation instructions.

- Generate an output by synthesizing from learned patterns: The system produces a response that resembles training examples in style and structure, but is assembled dynamically for the specific prompt.

- Tune quality using prompt design or fine-tuning: Real systems refine outputs through improved prompting and model customization, since raw generations can be inconsistent.

- Add human oversight for accuracy and safety: Generated outputs can contain errors or reinforce misconceptions, and trust and transparency remain open concerns, so human review is still necessary.
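The core loop of "learn patterns, accept a prompt, sample an output" can be shown with a toy model. The sketch below uses a word-level bigram table, which is far simpler than the transformer or diffusion models named above and is swapped in only to keep the example short. The corpus and the names `train_bigram` and `generate` are hypothetical.

```python
import random
from collections import defaultdict


def train_bigram(text):
    """Record which word follows which: a tiny stand-in for learning a distribution."""
    words = text.split()
    table = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        table[current].append(nxt)
    return table


def generate(table, prompt, max_words=8, seed=None):
    """Extend the prompt by repeatedly sampling a next word seen in training."""
    rng = random.Random(seed)
    out = prompt.split()
    for _ in range(max_words):
        candidates = table.get(out[-1])
        if not candidates:  # no known continuation, so stop early
            break
        out.append(rng.choice(candidates))
    return " ".join(out)


corpus = (
    "the model learns patterns and the model generates text "
    "and the system reviews text"
)
table = train_bigram(corpus)

print(generate(table, "the", seed=1))
print(generate(table, "the", seed=2))
```

Different seeds can yield different continuations from the same prompt, while a fixed seed reproduces the same one. Every generated word pair was seen in training, yet the full sentence may never have appeared in the corpus.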

Key Benefits of Generative AI

Generative AI is valuable when you need fast creation, flexible language interaction, or outputs that operate on unstructured information.

- Content synthesis rather than classification: Generative AI produces complete responses, drafts, or artifacts by constructing outputs token by token, rather than selecting from predefined labels or categories.

- Strong handling of unstructured data: These models work directly with text, images, audio, and mixed formats, reducing the need for manual feature design or rigid data schemas.

- Flexible interaction through natural language: Open-ended prompts allow users to express intent in plain language, lowering the barrier for non-technical teams to interact with complex systems.

- Rapid first-draft generation: Generative AI accelerates creation by producing usable initial outputs that humans can refine, review, or correct instead of starting from scratch.

- Cross-domain pattern transfer: Models reuse learned structure across domains, enabling them to generalize concepts from one context to related tasks without task-specific retraining.

- High adaptability through prompting and tuning: System behavior can be adjusted through prompt design or targeted fine-tuning, avoiding full pipeline redesigns for many use cases.

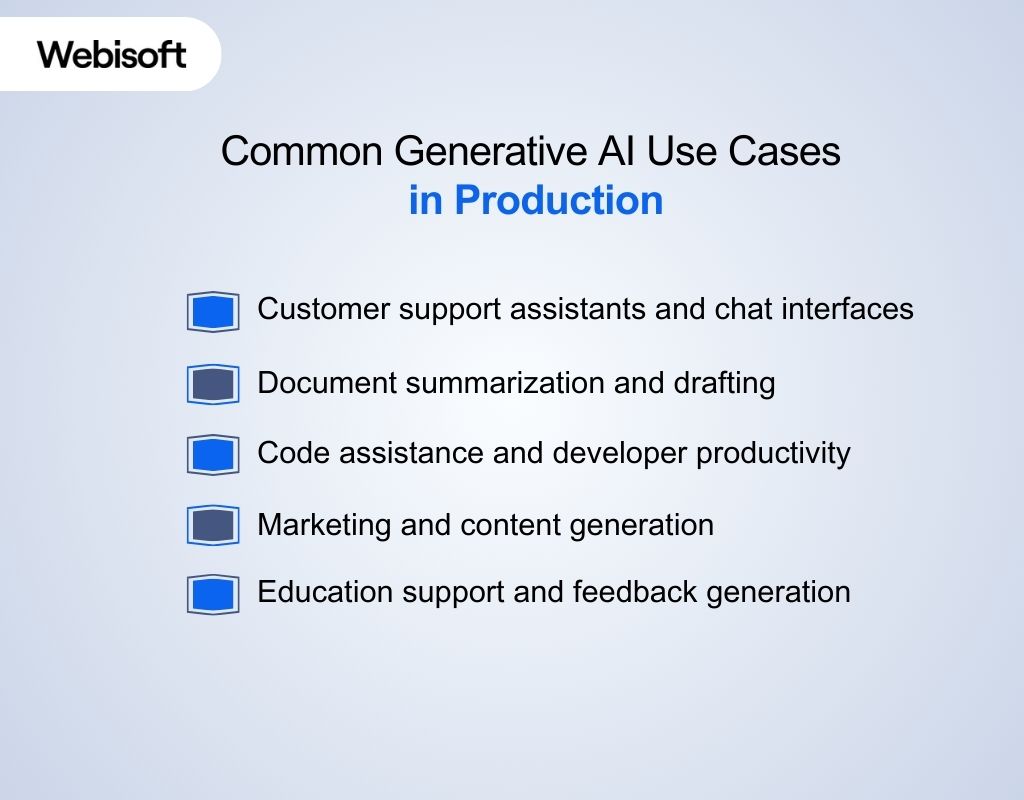

Common Generative AI Use Cases in Production

Generative AI is typically deployed where teams need to produce content, support conversational interaction, or synthesize information from large volumes of unstructured text.

Customer support assistants and chat interfaces

Generative AI systems generate context-aware responses to customer queries, handle routine interactions, and assist human agents by drafting replies that follow conversational intent.

Document summarization and drafting

Models condense long documents into concise summaries and create initial drafts of reports, emails, or internal documentation, reducing manual reading and writing effort.

Code assistance and developer productivity

Generative AI helps developers by suggesting code snippets, completing functions, or explaining logic based on natural language prompts and surrounding code context.

Marketing and content generation

Systems generate multiple variations of marketing copy or creative content, allowing teams to review, refine, and select outputs aligned with brand guidelines.

Education support and feedback generation

Generative AI is applied to provide automated feedback, summarize learning progress, and support engagement, often combined with traditional machine learning for analytics and tracking.

Limitations and Risks of Generative AI

Generative AI can be effective, but production use requires explicit controls for quality, governance, and trust, consistent with NIST's guidance on AI risk management.

- Hallucinated outputs: Models can generate confident responses that are incorrect or unsupported by facts, which creates risk without validation.

- Lack of deterministic behavior: Identical inputs may produce different outputs, complicating testing and consistency in production systems.

- Weak grounding in source data: Generated responses are statistical in nature and may not link cleanly to verifiable sources.

- Copyright and intellectual property ambiguity: Ownership and reuse of generated content can be unclear in commercial settings.

- Bias inherited from training data: Outputs can reflect imbalances present in training datasets, requiring monitoring and controls.

Generative AI vs Machine Learning: The Core Conceptual Difference

Generative AI and machine learning are often discussed together because generative AI is built on machine learning techniques, yet they solve different types of problems. The difference between generative AI and machine learning becomes clear when comparing what each produces, how each learns, and how each fits into workflows.

Goal and primary output

- Generative AI: Designed to produce new content such as text, images, or code by constructing outputs that resemble patterns learned during training. The goal is creation rather than selection.

- Machine learning: Designed to produce predictions, classifications, rankings, or scores from existing data. The goal is to support decisions by estimating the most likely outcome.

What the system learns

- Generative AI: Learns the structure and distribution of data so it can generate plausible new samples that follow similar patterns, even when the exact output has never appeared before.

- Machine learning: Learns relationships between inputs and known outcomes so it can generalize those relationships to new data and predict the correct result.

Type of data each system handles best

- Generative AI: Works best with unstructured data such as text, images, audio, or mixed formats where meaning and context matter more than fixed schemas.

- Machine learning: Works best with structured or semi-structured data such as tables, metrics, logs, and labeled records where inputs are clearly defined.

How users interact with it

- Generative AI: Interaction is primarily prompt driven. Small changes in wording, context, or constraints can significantly affect the shape and detail of the output.

- Machine learning: Interaction is input driven. Outputs are determined by structured features and remain consistent as long as the input data and model stay the same.

Degree of output predictability

- Generative AI: Produces probabilistic outputs that may vary between runs, even for identical inputs, which introduces flexibility but reduces repeatability.

- Machine learning: Produces stable and repeatable outputs once trained, making behavior easier to test, validate, and monitor in production.
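The variability described above usually comes from sampling. A minimal sketch, assuming a hypothetical three-token vocabulary with made-up scores, shows how a temperature setting moves a generator between repeatable (greedy) and variable output. The names `softmax` and `sample_token` are defined here for illustration and do not refer to any particular library.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Convert raw scores into probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(tokens, scores, temperature, rng):
    """Pick one token: greedy when temperature is 0, otherwise sampled."""
    if temperature == 0:
        return tokens[scores.index(max(scores))]
    probs = softmax(scores, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


tokens = ["approve", "review", "reject"]
scores = [2.0, 1.5, 0.5]  # hypothetical model scores (logits)
rng = random.Random(0)

greedy = [sample_token(tokens, scores, 0, rng) for _ in range(5)]
sampled = [sample_token(tokens, scores, 1.0, rng) for _ in range(5)]
print(greedy)
print(sampled)
```

Greedy selection always returns the highest-scoring token, which is repeatable, while sampling at a temperature of 1.0 can return any of the three. This is one reason generated outputs are harder to test with exact-match assertions.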

Success criteria and evaluation

- Generative AI: Success is measured by usefulness, coherence, and alignment with constraints, since there may be multiple acceptable outputs for a single input.

- Machine learning: Success is measured against a defined ground truth using metrics such as accuracy, precision, recall, or error rates.

Integration into business workflows

- Generative AI: Commonly integrated as an assistive layer that supports human work by drafting, summarizing, or transforming information.

- Machine learning: Commonly integrated as an automated decision layer that directly influences approvals, scoring, routing, or risk controls.

Best fit in real operations

- Generative AI: Best suited for tasks that involve synthesis, drafting, transformation, or natural language interaction at scale.

- Machine learning: Best suited for high volume, repeatable decisions where consistency and measurable accuracy are required.

Typical role inside enterprise systems

- Generative AI: Often sits closer to the user interface or content layer, translating human intent into generated outputs or suggested actions.

- Machine learning: Often sits deeper in the system stack, producing scores or predictions that drive workflows and policies.

Side by Side Comparison: Gen AI vs Machine Learning

The table below gives a quick reference for how generative AI and machine learning differ across core technical and operational dimensions, with examples drawn from common enterprise workflows.

| Dimension | Generative AI | Machine Learning |
| --- | --- | --- |
| Core objective | Generate new content based on learned patterns | Predict, classify, rank, or score based on data |
| Output format | Text, images, code, audio, or mixed content | Labels, probabilities, numerical values |
| Output variability | Probabilistic and variable across runs | Stable and repeatable once trained |
| Data structure focus | Unstructured and semi-structured data | Structured and labeled data |
| Primary interaction method | Prompts and contextual input | Feature inputs and data pipelines |
| Evaluation approach | Usefulness, coherence, and alignment | Accuracy, precision, recall, error rates |
| Predictability level | Lower, due to sampling behavior | Higher, due to deterministic inference |
| Role in workflows | Assistive and content-driven | Decision-driven and rule-enforcing |
| Automation suitability | Human-in-the-loop preferred | Fully automated workflows common |
| Typical system layer | Interface or content layer | Decision or policy layer |
| Change control | Prompt tuning and constraint updates | Retraining and model versioning |
| Best suited problems | Drafting, synthesis, transformation | Forecasting, scoring, detection |
| Learning curve | Faster initial adoption through natural language interaction | Steeper learning curve due to data preparation and modeling |
| Ethical considerations | Higher risk of hallucination, bias amplification, and misuse | Risks center on bias, fairness, and outcome accountability |

When to Use Generative AI vs When to Use Machine Learning

Choosing between generative AI and machine learning is not about what is “more advanced.” It is about picking the approach that matches your task, your data, your tolerance for variability, and your operational constraints like cost, latency, and auditability.

Use Generative AI when

- The output must be created, not predicted: Use it when you need drafts, summaries, rewrites, explanations, or content variations rather than a numeric score or label.

- The core input is unstructured content: If the work lives in documents, PDFs, emails, knowledge bases, or free-text conversations, generative AI is usually the more natural fit.

- You need a natural-language interface for users: If people need to ask questions in plain English and get usable responses, generative AI is designed for that interaction style.

- Your success criterion is usefulness and alignment, not a single correct answer: For many content and support workflows, there are multiple acceptable outputs. Generative AI performs best when you can evaluate outputs by quality and constraints instead of strict ground truth.

- You can support human review or strong guardrails: Because generative outputs can vary and require governance, it fits best where review, grounding patterns, or policy constraints can be applied.

Use Machine Learning when

- You need consistent, repeatable decisions at scale: If your system must return the same kind of output every time, like an approval score, fraud probability, or churn risk, ML is typically the better tool.

- There is a clear target and measurable correctness: When you have labeled outcomes or objective metrics to optimize, ML’s training and evaluation workflow is a better match than open-ended generation.

- You are working mainly with structured data: Tables, transactional records, time series, and operational metrics are classic ML territory, and it is often simpler and cheaper than forcing a generative approach.

- Precision, explainability, or auditing is a hard requirement: For regulated or high-stakes decisions, ML often offers more stable evaluation and monitoring patterns than generative systems.

- Cost, latency, and long-term maintenance must stay predictable: Traditional ML models are often cheaper to run and easier to monitor than large generative systems, especially when the task is straightforward prediction or classification.

A quick sanity check before choosing

If you answer “yes” to these, you are usually in Generative AI territory: unstructured data, language-first interaction, multiple acceptable outputs. If you answer “yes” to these, you are usually in Machine Learning territory: structured data, known outcomes, measurable accuracy, repeatable decisions.
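The sanity check above can be written down as a small helper so that teams record their answers explicitly. This is only an illustration of the checklist, not a scoring method from any framework. The signal names and the function `suggest_approach` are hypothetical, and the "combine both" result is an assumption drawn from the hybrid pattern discussed later in this article.

```python
GENAI_SIGNALS = (
    "unstructured_data",
    "language_first_interaction",
    "multiple_acceptable_outputs",
)
ML_SIGNALS = (
    "structured_data",
    "known_outcomes",
    "measurable_accuracy",
    "repeatable_decisions",
)


def suggest_approach(yes_answers):
    """Map the set of signals answered 'yes' to a starting recommendation."""
    genai_hits = [s for s in GENAI_SIGNALS if s in yes_answers]
    ml_hits = [s for s in ML_SIGNALS if s in yes_answers]
    if genai_hits and ml_hits:
        return "consider combining both"
    if genai_hits:
        return "generative AI"
    if ml_hits:
        return "machine learning"
    return "unclear: revisit the problem framing"


print(suggest_approach({"structured_data", "known_outcomes"}))
print(suggest_approach({"unstructured_data", "language_first_interaction"}))
print(suggest_approach({"unstructured_data", "repeatable_decisions"}))
```

The output is a starting point for discussion. Cost, latency, and auditability constraints from the sections above still need to be weighed separately.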

In applied research contexts like learning analytics, ML is still dominant for prediction tasks, while GenAI is emerging for feedback and adaptive support, with adoption concerns around feasibility and transparency.

Choosing between generative AI and machine learning often comes down to execution, not theory. Explore how Webisoft helps teams design and deploy production-ready AI systems that balance accuracy, flexibility, and governance from day one.

Can Generative AI and Machine Learning Work Together?

Yes. Generative AI and machine learning can work together when each handles what it does best within the same system, rather than trying to replace one another. In practice, machine learning provides reliable prediction, scoring, and control signals, while generative AI sits on top to interpret intent, generate content, or explain outcomes using natural language.

This pattern improves usability without sacrificing consistency: ML enforces rules and accuracy, while GenAI adds flexibility and interaction.

Successful deployments separate responsibilities, add guardrails, and monitor both layers independently to manage risk and align them with governance, evaluation, and operational constraints.
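One way to picture this separation of responsibilities is a small pipeline in which the decision comes only from the scoring layer and the generative layer is limited to wording. The sketch below rests on several assumptions: `risk_score` is a rule-based placeholder standing in for a trained model, `generate` is a hypothetical callable representing any text-generation model, and the 0.7 threshold is arbitrary.

```python
def risk_score(transaction):
    """Placeholder for a trained ML model: returns a repeatable score in [0, 1]."""
    score = 0.0
    if transaction["amount"] > 1000:
        score += 0.5
    if transaction["new_device"]:
        score += 0.3
    if transaction["country_mismatch"]:
        score += 0.2
    return round(score, 2)


def decide(score, threshold=0.7):
    """Decision layer: a fixed rule applied to the model score."""
    return "hold_for_review" if score >= threshold else "approve"


def draft_explanation(decision, score, generate=None):
    """Generative layer: words the outcome but never changes it."""
    prompt = (
        f"Explain in one sentence why a transaction with risk score "
        f"{score:.2f} was marked '{decision}'."
    )
    if generate is None:
        # No real model is called here; a template stands in for generation.
        return f"The transaction was marked '{decision}' because its risk score was {score:.2f}."
    return generate(prompt)


def handle(transaction, generate=None):
    score = risk_score(transaction)
    decision = decide(score)
    text = draft_explanation(decision, score, generate)
    # Guardrail: if the generated text does not state the decision, fall back.
    if decision not in text:
        text = f"Decision: {decision} (risk score {score:.2f})."
    return {"decision": decision, "score": score, "explanation": text}


result = handle({"amount": 1500, "new_device": True, "country_mismatch": False})
print(result)
```

Because the decision is computed before any text is generated, an unreliable generator can at worst trigger the fallback message. It cannot flip an approval, which keeps the two layers independently testable.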

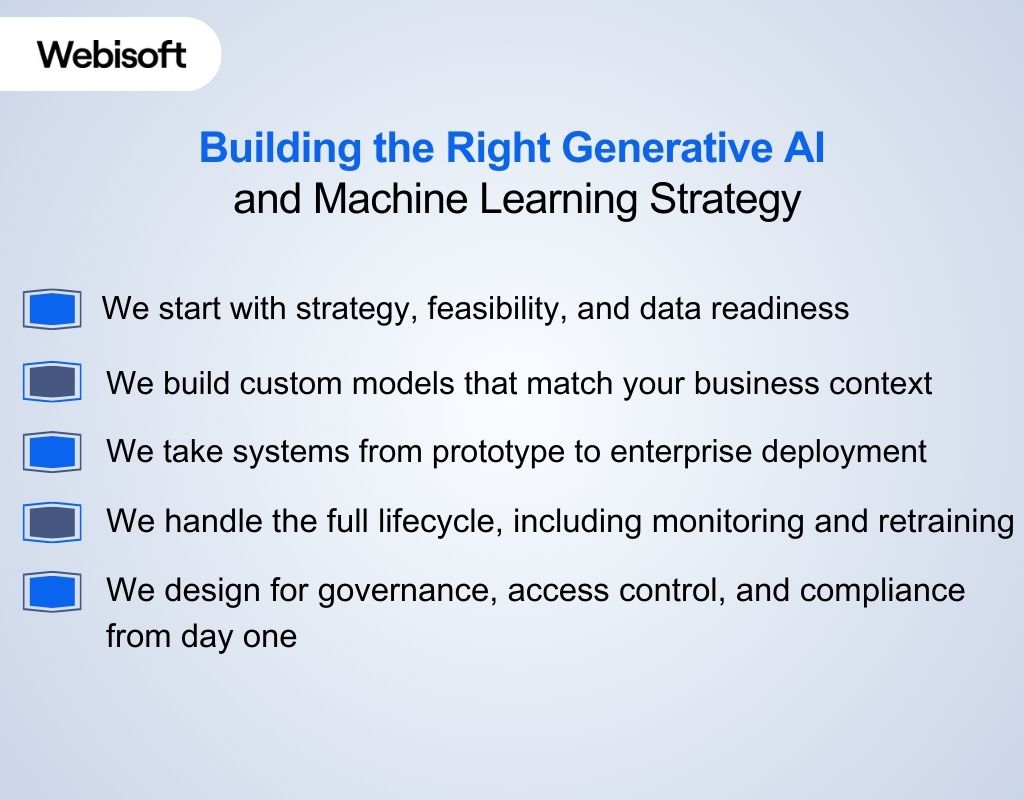

Building the Right Generative AI and Machine Learning Strategy

At this point, you know the tradeoffs between generative AI and machine learning and the cost of choosing wrong. At Webisoft, we help you pick the right approach, validate data readiness, and ship systems with security and compliance built in.

We start with strategy, feasibility, and data readiness

We begin by clarifying outcomes, checking whether your data can support them, and mapping the shortest path to a measurable win. Our AI strategy consultation focuses on where ML or GenAI fits your workflows, not what sounds trendy.

We build custom models that match your business context

Generic models miss the variables that matter in real operations, like customer behavior, policy rules, and industry constraints. We design models around your data, your risk posture, and the decisions your teams actually make every day.

We take systems from prototype to enterprise deployment

A working demo is not the finish line. We integrate AI into your existing systems and ship it with the foundations needed for real usage, including reliable deployment and operational practices.

We handle the full lifecycle, including monitoring and retraining

Models degrade when data shifts. We manage the ML lifecycle so performance stays visible, failures surface early, and retraining happens on a controlled cadence rather than after business impact.

We design for governance, access control, and compliance from day one

If you work with sensitive data, AI has to ship with guardrails. Our generative AI deployments include role-based access controls and align with compliance expectations, including GDPR, HIPAA, and SOC 2, tailored to your needs.

Ready to turn your generative AI vs machine learning decisions into systems that work in production? Contact Webisoft to discuss your use case, validate feasibility, and build a secure, governed solution aligned with your business goals.

Conclusion

Generative AI vs machine learning stops being confusing when intent takes priority over hype. Systems built to generate language behave very differently from systems designed to make decisions. Understanding that distinction early prevents unstable architectures, wasted effort, and tools that look impressive but fail under real-world pressure.

At Webisoft, we help teams move from comparison to execution. We design AI systems that match real business goals, balance flexibility with control, and hold up in production. The result is not just working AI, but systems you can trust, scale, and rely on long term.

Frequently Asked Questions

Is generative AI machine learning?

Yes, generative AI is a form of machine learning, but it represents a specific subset rather than the entire field. It focuses on generating new content, while most machine learning systems are designed for prediction, classification, or scoring tasks.

Can generative AI replace traditional machine learning systems?

No. Generative AI is designed for content creation and interaction, not consistent decision making. Traditional machine learning remains essential for prediction, scoring, and automation where accuracy, repeatability, and measurable outcomes are required in production systems.

Is generative AI harder to secure than machine learning?

Yes. Generative AI introduces additional risks such as prompt injection, unintended data exposure, and unpredictable outputs. These risks require stronger guardrails, monitoring, and access controls than most traditional machine learning pipelines.

Should I learn machine learning or generative AI?

It depends on where your work is headed. Learning machine learning first builds strong foundations in data, modeling, and evaluation, which makes generative AI easier to pick up later. Start with generative AI only if your work centers on language, content systems, or interaction design.

Is generative AI deep learning?

Yes, generative AI relies on deep learning architectures such as transformers, but deep learning itself is broader. Many deep learning models perform prediction or classification without generating content, which places generative AI as an application rather than the whole field.