Guide to Machine Learning in the Cloud Environment

- March 3, 2026

Training large machine learning models used to mean buying GPU servers, wiring networks, and babysitting hardware. Now? You open a cloud console, request compute, and start training. No forklifts. No server rooms. Just infrastructure on demand.

But the cloud is not a magic wand. Spin up too much compute and your bill explodes. Design poorly and your pipeline collapses. Deploy carelessly and your model drifts quietly in production.

That’s why this guide matters. You’ll learn how machine learning in the cloud actually works and how it differs from on-premise systems. You’ll also discover how to control costs and build scalable, production-ready ML systems that survive real-world pressure.

Contents

- 1 What Is Machine Learning in the Cloud?

- 2 How Machine Learning in the Cloud Works

- 3 Machine Learning in the Cloud vs On-Premise

- 4 Major Cloud Platforms for Machine Learning

- 5 Real Business Use Cases of Machine Learning in the Cloud

- 6 Cost Engineering in Cloud-Based Machine Learning

- 7 Why Cloud Machine Learning Projects Fail

- 8 Future Trends in Machine Learning in the Cloud

- 9 Machine Learning in the Cloud: Implementation with Webisoft

- 10 Conclusion

- 11 Frequently Asked Questions

What Is Machine Learning in the Cloud?

Machine learning in the cloud refers to designing, training, deploying, and operating machine learning models using cloud-hosted infrastructure and managed services instead of locally maintained servers.

Data is stored in cloud systems, training runs on scalable compute such as virtual machines or GPUs, and models deploy through managed endpoints or batch pipelines. The cloud provides elastic resources, automated scaling, and integrated tooling for experimentation, versioning, and monitoring.

Rather than investing in fixed hardware, organizations consume compute, storage, and machine learning services on demand. This lets teams handle large datasets, distributed training jobs, and real-time inference without being capped by the hardware they own.

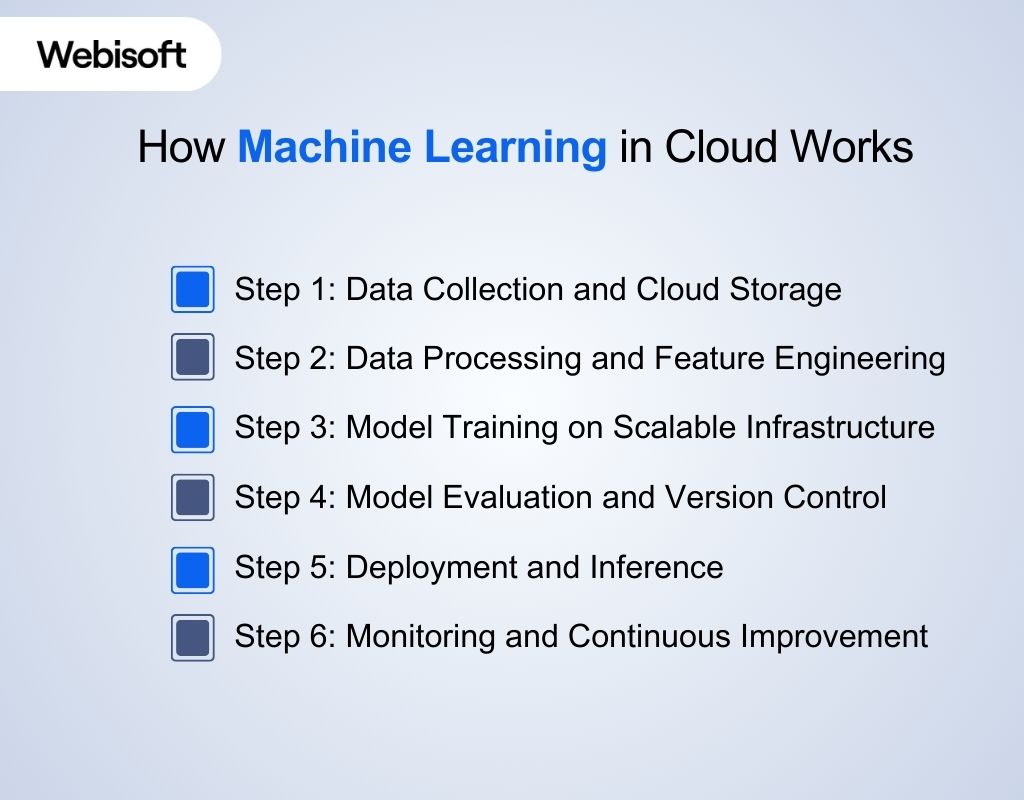

How Machine Learning in the Cloud Works

With the definition in place, the next question is how the lifecycle operates in practice. Cloud platforms structure the workflow into clear stages, each supported by scalable infrastructure and managed services.

Step 1: Data Collection and Cloud Storage

Data is first gathered from applications, databases, sensors, or third-party systems. It is then stored in cloud storage systems such as object storage, managed databases, or data warehouses. These systems provide durability, redundancy, and controlled access while keeping data centrally available for training and analysis.

Step 2: Data Processing and Feature Engineering

Raw data is cleaned, validated, and transformed into structured formats suitable for modeling. Cloud-based processing tools handle large datasets using distributed computing. Feature engineering pipelines extract meaningful patterns and variables that improve model performance and predictive accuracy.
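
As a minimal sketch of the cleaning and feature steps described above, assume a hypothetical transaction record with `amount` and `timestamp` fields. The field names, validation rules, and chosen features are illustrative assumptions, not part of any specific cloud service:

```python
from datetime import datetime
from math import log1p


def clean(records):
    """Validation step: drop records with missing or invalid fields."""
    valid = []
    for r in records:
        amount = r.get("amount")
        ts = r.get("timestamp")
        if not isinstance(amount, (int, float)) or amount < 0 or not ts:
            continue
        valid.append({
            "amount": float(amount),
            "timestamp": datetime.fromisoformat(ts),
        })
    return valid


def to_features(record):
    """Turn one cleaned record into numeric, model-ready features."""
    ts = record["timestamp"]
    return {
        "log_amount": log1p(record["amount"]),  # compress heavy-tailed amounts
        "hour_of_day": ts.hour,
        "is_weekend": int(ts.weekday() >= 5),   # Saturday=5, Sunday=6
    }


# Hypothetical raw input: one valid record and two that fail validation.
raw = [
    {"amount": 120.0, "timestamp": "2026-03-07T14:30:00"},
    {"amount": None, "timestamp": "2026-03-07T15:00:00"},  # missing amount
    {"amount": -5, "timestamp": "2026-03-08T09:00:00"},    # negative amount
]
features = [to_features(r) for r in clean(raw)]
```

In a cloud pipeline the same two functions would typically run inside a distributed processing job rather than a single Python process; the logic stays the same, only the execution engine changes.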

Step 3: Model Training on Scalable Infrastructure

Training jobs run on virtual machines, GPU instances, or distributed clusters provisioned on demand. Cloud elasticity allows compute resources to scale up or down depending on dataset size and complexity. This can shorten training time and means capacity is no longer fixed by the hardware you own.

Step 4: Model Evaluation and Version Control

Trained models are evaluated using validation datasets and performance metrics. Cloud experiment tracking systems record parameters, configurations, and results. Approved models are stored in a model registry, enabling structured version control and reproducibility.
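
To make the registry idea concrete, here is a small in-memory sketch. `ModelRegistry` and `ModelVersion` are hypothetical names invented for illustration; managed platforms provide their own registries with persistent storage and access control, and their APIs will differ:

```python
from dataclasses import dataclass, field


@dataclass
class ModelVersion:
    version: int
    params: dict     # hyperparameters used for this run
    metrics: dict    # evaluation results on the validation set
    approved: bool = False


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry; real services persist this state."""
    versions: list = field(default_factory=list)

    def register(self, params, metrics):
        # Versions are numbered sequentially, starting at 1.
        mv = ModelVersion(len(self.versions) + 1, params, metrics)
        self.versions.append(mv)
        return mv

    def approve(self, version):
        self.versions[version - 1].approved = True

    def latest_approved(self):
        approved = [v for v in self.versions if v.approved]
        return approved[-1] if approved else None


registry = ModelRegistry()
registry.register({"learning_rate": 0.1}, {"val_auc": 0.91})
registry.register({"learning_rate": 0.05}, {"val_auc": 0.93})
registry.approve(2)  # only approved versions are eligible for deployment
```

Keeping parameters and metrics next to each version is what makes a result reproducible: anyone can look up exactly which configuration produced the model now in production.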

Step 5: Deployment and Inference

Validated models are deployed to production through managed endpoints or batch pipelines. Real-time APIs return predictions with low latency, while batch inference processes large volumes of data periodically. Cloud auto-scaling adjusts resources based on demand.
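
One common way auto-scaling decides how much capacity to run is a proportional, target-tracking rule: scale the replica count by the ratio of the observed metric to its target. This is the same shape as the rule documented for the Kubernetes Horizontal Pod Autoscaler. The sketch below is a simplified illustration; the function name, defaults, and limits are assumptions, and managed endpoints apply their own policies and cooldowns:

```python
from math import ceil


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Scale so that the per-replica metric moves toward the target.

    The metric could be requests per second per replica, CPU percent, etc.
    """
    raw = ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds so a traffic spike cannot scale forever.
    return max(min_replicas, min(max_replicas, raw))


scale_up = desired_replicas(4, current_metric=90, target_metric=60)    # 6
scale_down = desired_replicas(4, current_metric=15, target_metric=60)  # 1
capped = desired_replicas(4, current_metric=300, target_metric=60)     # 10
```

The `max_replicas` bound matters as much as the formula: it is the setting that stops an unexpected traffic spike from turning into an unexpected bill.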

Step 6: Monitoring and Continuous Improvement

After deployment, monitoring tools track accuracy, latency, and system health. Drift detection mechanisms identify performance degradation. When needed, retraining workflows are triggered to update the model, keeping it aligned with evolving data patterns.
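
Drift detection can take many forms. One widely used statistic is the Population Stability Index (PSI), which compares how a feature is distributed in a baseline sample versus recent production data. The sketch below is one possible implementation under simple assumptions (equal-width bins taken from the baseline range, a small `eps` to avoid dividing by zero); it is not the method of any particular monitoring product:

```python
from math import log


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back if all baseline values match

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1  # clamp out-of-range values
        # Replace empty bins with eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]   # training-time feature values
shifted = [v + 0.5 for v in baseline]      # production values after a shift

no_drift = psi(baseline, baseline)
drift = psi(baseline, shifted)
```

A frequently quoted rule of thumb treats a PSI below about 0.1 as stable and above about 0.25 as a significant shift, but those cutoffs are conventions rather than guarantees. A retraining trigger would compare the score against whatever threshold the team has validated for its own data.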

Build Scalable Cloud Machine Learning with Webisoft.

Design, deploy, and scale production-ready ML systems confidently!

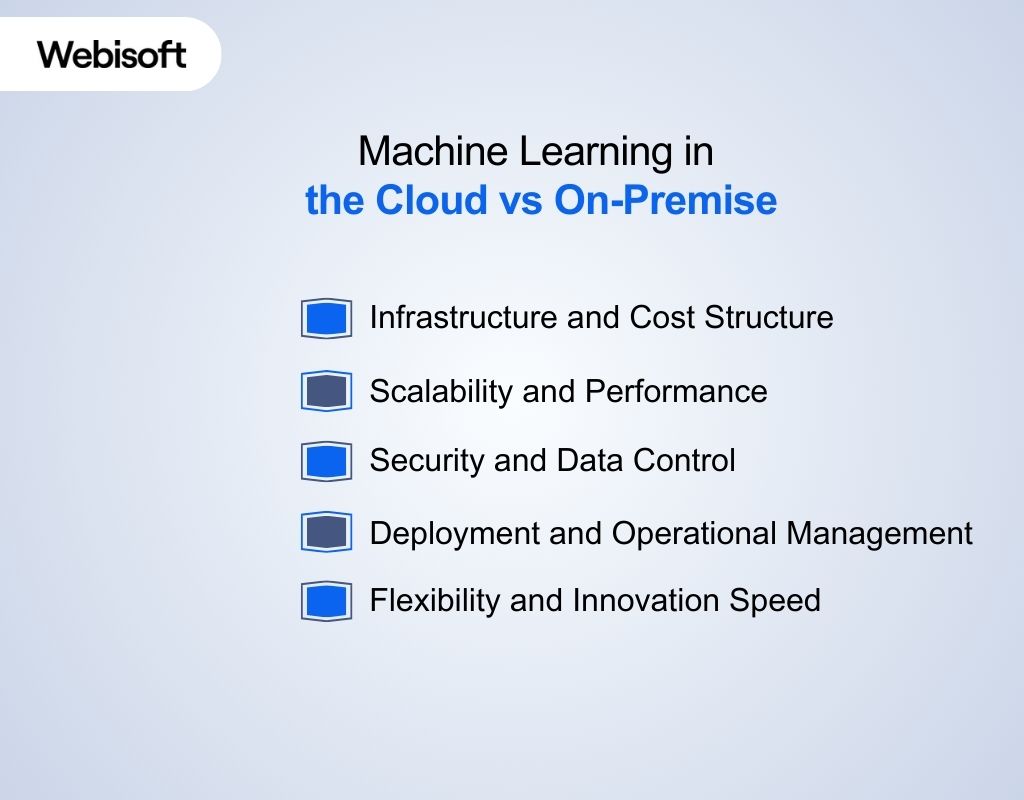

Machine Learning in the Cloud vs On-Premise

Selecting the right infrastructure for machine learning impacts cost, scalability, security, and long-term operational efficiency.

While both cloud and on-premise environments support model development and deployment, their architectural and management approaches differ significantly.

Infrastructure and Cost Structure

- Machine learning in the cloud: Infrastructure operates on a usage-based model. Organizations pay for compute, storage, and networking as needed, shifting expenses from capital investment to operational spending while avoiding hardware procurement and maintenance.

- On-premise machine learning: Infrastructure requires upfront capital investment in servers, GPUs, networking equipment, and data center space. Ongoing maintenance, upgrades, and hardware lifecycle management remain the organization’s responsibility.
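
The trade-off between upfront capital and usage-based spending can be framed as a break-even calculation. The sketch below uses entirely hypothetical prices (not real vendor pricing) and ignores factors such as depreciation, staffing, and discounts:

```python
def breakeven_hours(hardware_cost, onprem_hourly_opex, cloud_hourly_rate):
    """Hours of use at which owning hardware becomes cheaper than renting.

    Returns None when the cloud rate is not higher than on-premise running
    costs, in which case owning never pays back the upfront purchase.
    """
    saving_per_hour = cloud_hourly_rate - onprem_hourly_opex
    if saving_per_hour <= 0:
        return None
    return hardware_cost / saving_per_hour


# Hypothetical figures, for illustration only.
hours = breakeven_hours(
    hardware_cost=30_000,     # upfront GPU server purchase
    onprem_hourly_opex=0.5,   # power, cooling, maintenance per hour of use
    cloud_hourly_rate=3.0,    # comparable on-demand instance
)
```

With these made-up numbers the break-even point is 12,000 hours of actual use, roughly 1.4 years of continuous operation. A team that keeps the hardware busy only 20% of the time would need close to seven years to reach it, which is why utilization, not list price, usually decides the comparison.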

Scalability and Performance

- Machine learning in the cloud: Compute resources scale elastically. Training clusters and inference endpoints expand or contract automatically based on workload demand, supporting large datasets and distributed training without hardware limitations.

- On-premise machine learning: Scaling depends on physical hardware availability. Expanding capacity requires purchasing and installing additional infrastructure, which can slow experimentation and limit rapid workload growth.

Security and Data Control

- Machine learning in the cloud: Security relies on shared responsibility. Providers offer encryption, identity management, and compliance tooling, while organizations configure access controls and governance policies within the cloud environment.

- On-premise machine learning: Full control over physical infrastructure and network boundaries remains internal. This may suit organizations with strict data residency or regulatory constraints requiring isolated environments.

Deployment and Operational Management

- Machine learning in the cloud: Managed services simplify deployment, monitoring, logging, and version control. Automation tools support continuous integration and model updates with reduced operational overhead.

- On-premise machine learning: Deployment pipelines, monitoring systems, and infrastructure automation must be built and maintained internally. This increases operational complexity but allows deeper customization.

Flexibility and Innovation Speed

- Machine learning in the cloud: Teams can experiment quickly using pre-configured ML services, GPU instances, and managed pipelines. New environments can be provisioned within minutes, accelerating research and development cycles.

- On-premise machine learning: Experimentation speed depends on existing infrastructure capacity. Provisioning new environments or upgrading hardware may require longer planning cycles and procurement processes.

Major Cloud Platforms for Machine Learning

The effectiveness of machine learning in the cloud largely depends on the platform you choose. Major cloud providers offer purpose-built services, scalable infrastructure, and integrated tooling that directly influence performance, cost control, and deployment flexibility.

Amazon Web Services (AWS)

AWS provides one of the most mature machine learning ecosystems. Its machine learning capabilities are delivered primarily through Amazon SageMaker, a managed platform for building, training, and deploying models at scale.

It supports managed notebooks, distributed training, automated model tuning, and scalable inference endpoints. AWS also integrates tightly with data services such as S3, Redshift, and Kinesis, enabling complete data pipelines from ingestion to prediction.

Its broad infrastructure footprint and global availability make it suitable for both startups and enterprises requiring flexibility and scalability.

Microsoft Azure Machine Learning

Azure Machine Learning is designed with strong enterprise integration in mind. It connects seamlessly with Microsoft tools such as Power BI, Azure Data Factory, and enterprise identity systems. Azure supports both managed ML workflows and customizable environments using containers and Kubernetes. It is often chosen by organizations already operating within Microsoft ecosystems or requiring hybrid cloud deployments.

Google Cloud Platform (GCP)

Google Cloud focuses heavily on data-centric machine learning and provides the infrastructure foundations needed for large-scale AI workloads. Vertex AI unifies model training, deployment, and monitoring within a managed environment.

GCP is known for its strength in data analytics and its integration with AI research. BigQuery handles large-scale analytics, while Tensor Processing Units (TPUs) accelerate large-scale training and advanced experimentation. It is frequently selected for data-intensive and research-driven workloads.

How to Choose the Right Platform

Choosing the right cloud platform requires aligning infrastructure capabilities with business and technical priorities. The decision should be guided by structured evaluation criteria:

- Workload characteristics: Match the platform to training intensity, real-time inference demands, and data volume requirements.

- Regulatory and governance constraints: Ensure the platform supports required compliance standards and data residency controls.

- Existing technology ecosystem: Consider compatibility with current enterprise systems, analytics tools, and identity management frameworks.

- Scalability and performance needs: Evaluate elasticity, distributed training support, and global infrastructure reach.

- Team expertise and operational maturity: Align with platforms where internal skills and DevOps practices are strongest.

Choosing the right platform is only the beginning; execution determines long-term success. You can plan, build, and scale your cloud machine learning systems with Webisoft’s AI engineering expertise to ensure performance, security, and measurable business results.

Real Business Use Cases of Machine Learning in the Cloud

AI and machine learning in the cloud deliver measurable impact across industries by combining scalable infrastructure with data-driven decision systems. Organizations use cloud environments to operationalize predictive models, automate analysis, and support real-time intelligence at enterprise scale.

Fraud Detection and Risk Scoring

Financial institutions use cloud-based machine learning to detect fraudulent transactions in real time. Models analyze transaction history, device behavior, geolocation, and spending patterns to flag anomalies within milliseconds.

Cloud infrastructure enables high-throughput processing, allowing millions of transactions to be evaluated simultaneously. Elastic scaling ensures systems remain responsive during peak activity while maintaining low latency for approval workflows.

Predictive Maintenance in Manufacturing and IoT

Manufacturers deploy machine learning models in the cloud to predict equipment failures before they occur. Sensor data from machines is streamed to cloud storage and analyzed to identify abnormal vibration, temperature, or performance patterns.

By forecasting maintenance needs, organizations reduce downtime, extend asset life, and optimize spare parts inventory. Cloud compute supports large-scale processing of continuous IoT data streams.
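
As a simplified illustration of flagging abnormal sensor patterns, the sketch below compares each reading against a rolling window of recent values and marks large deviations. A rolling z-score is a basic statistical baseline chosen here for brevity, not a trained predictive-maintenance model; the window size, threshold, and sample readings are assumptions:

```python
from collections import deque
from statistics import mean, stdev


def detect_anomalies(readings, window=5, z_threshold=3.0):
    """Return indexes of readings that deviate strongly from the recent window."""
    recent = deque(maxlen=window)
    anomalies = []
    for i, value in enumerate(readings):
        if len(recent) == window:  # wait until the window is full
            mu, sigma = mean(recent), stdev(recent)
            if sigma > 0 and abs(value - mu) / sigma > z_threshold:
                anomalies.append(i)
        recent.append(value)
    return anomalies


# Hypothetical vibration readings with one obvious spike at index 6.
vibration = [1.0, 1.1, 0.9, 1.0, 1.1, 1.0, 4.5, 1.0]
flagged = detect_anomalies(vibration)
```

Production systems usually replace this rule with a model trained on labeled failure history, but a simple baseline like this is useful for checking that the streaming pipeline delivers data in the expected shape.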

Personalization and Recommendation Systems

Retail and digital platforms rely on cloud-hosted models to personalize user experiences. Recommendation engines analyze browsing history, purchase behavior, and engagement metrics to generate tailored product suggestions.

The cloud supports large datasets and dynamic retraining, ensuring recommendations adapt to evolving customer preferences. Real-time inference endpoints deliver personalized results instantly during user sessions.

Natural Language Processing and Document Intelligence

Organizations use cloud-based machine learning for text classification, sentiment analysis, chatbot automation, and document extraction. NLP models process customer feedback, emails, contracts, and support tickets at scale.

Cloud services provide distributed training and scalable APIs for handling high volumes of text data. This enables faster decision-making and automation of manual review processes.

Computer Vision and Image Analytics

Cloud machine learning supports image recognition, object detection, and quality inspection systems. Industries such as healthcare, retail, and logistics analyze images to identify defects, detect anomalies, or classify visual data.

GPU-enabled infrastructure accelerates model training and inference, while cloud storage manages large image datasets efficiently. These systems operate at scale without requiring specialized on-premise hardware.

Customer Churn Prediction and Behavioral Analytics

Subscription-based businesses use predictive models to identify customers at risk of leaving. Cloud-hosted machine learning analyzes engagement patterns, service usage, and historical churn signals to assign risk scores.

Organizations then trigger targeted retention strategies based on model outputs. Scalable cloud infrastructure allows retraining models regularly as user behavior changes.

Cost Engineering in Cloud-Based Machine Learning

Cloud computing for machine learning offers flexibility, but costs can escalate without discipline. Managing cost is not about cutting corners. It is about aligning compute, storage, and deployment decisions with actual workload demand.

Training Efficiency

Model training is often the biggest cost driver, especially with GPUs. Oversized instances and long-running experiments waste resources. Right-sizing compute and stopping idle jobs keeps spending controlled.
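
A simple way to act on this is to flag jobs that have been running for a while with almost no GPU activity. The sketch below assumes utilization samples have already been exported from a monitoring system; the job records, field names, and thresholds are hypothetical:

```python
def flag_idle_jobs(jobs, util_threshold=0.10, min_hours=1.0):
    """Return names of jobs with sustained low average GPU utilization."""
    idle = []
    for job in jobs:
        samples = job["gpu_util_samples"]
        avg = sum(samples) / len(samples) if samples else 0.0
        # Skip very young jobs: they may still be loading data.
        if job["hours_running"] >= min_hours and avg < util_threshold:
            idle.append(job["name"])
    return idle


# Hypothetical job inventory with utilization as a fraction between 0 and 1.
jobs = [
    {"name": "bert-finetune", "hours_running": 6.0,
     "gpu_util_samples": [0.92, 0.88, 0.95]},
    {"name": "notebook-dev", "hours_running": 14.0,
     "gpu_util_samples": [0.02, 0.00, 0.01]},
    {"name": "just-started", "hours_running": 0.2,
     "gpu_util_samples": [0.00]},
]
idle_jobs = flag_idle_jobs(jobs)
```

Here only `notebook-dev` is flagged: it has held a GPU for 14 hours at about 1% utilization. Whether to stop such a job automatically or just notify its owner is a policy decision for the team.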

Inference Cost Management

Production models create ongoing expenses. Real-time endpoints that remain active during low traffic periods can drive unnecessary charges. Auto-scaling reduces this risk by adjusting capacity based on demand. For predictable workloads, batch inference can significantly lower cost compared to always-on APIs.
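
The gap between an always-on endpoint and a scheduled batch job is easy to estimate with basic arithmetic. The rates below are hypothetical and ignore storage, data transfer, and per-request charges:

```python
HOURS_PER_MONTH = 730  # average number of hours in a month


def always_on_cost(hourly_rate, instances=1):
    """Monthly cost of an endpoint that never scales to zero."""
    return hourly_rate * instances * HOURS_PER_MONTH


def batch_cost(hourly_rate, hours_per_run, runs_per_month, instances=1):
    """Monthly cost of compute that exists only while a batch job runs."""
    return hourly_rate * instances * hours_per_run * runs_per_month


# Hypothetical: the same instance type at 2.0 currency units per hour.
endpoint = always_on_cost(hourly_rate=2.0)
nightly = batch_cost(hourly_rate=2.0, hours_per_run=2, runs_per_month=30)
```

With these assumed figures the always-on endpoint costs 1,460 per month while a two-hour nightly batch costs 120. The comparison only holds when predictions are not needed the moment a request arrives; latency requirements, not cost alone, decide which pattern fits.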

Storage Discipline

Training data, logs, and model artifacts grow continuously. Not all of it needs premium storage. Frequently used datasets should remain in high-performance tiers, while older artifacts can move to lower-cost storage. Controlled data transfer between regions also prevents avoidable network expenses.
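
Tiering decisions like this are usually expressed as lifecycle rules based on how recently an object was used. The sketch below shows the logic in plain Python; the tier names and day thresholds are assumptions, and each provider has its own storage classes and rule syntax:

```python
from datetime import date


def storage_tier(last_accessed, today, warm_after_days=30, cold_after_days=180):
    """Pick a storage tier from the number of days since last access."""
    age = (today - last_accessed).days
    if age >= cold_after_days:
        return "archive"      # cheapest storage, slow retrieval
    if age >= warm_after_days:
        return "infrequent"   # lower storage price, per-access fees
    return "hot"              # high-performance tier for active data


today = date(2026, 3, 3)
active_dataset = storage_tier(date(2026, 3, 1), today)
old_checkpoint = storage_tier(date(2026, 1, 1), today)
stale_logs = storage_tier(date(2025, 1, 1), today)
```

In practice the rule would be configured once on the storage bucket so that objects move between tiers automatically, rather than being evaluated by application code.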

Visibility and Governance

Cost problems often stem from lack of visibility. Monitoring resource utilization helps detect idle endpoints and underused clusters. Budget alerts and usage policies prevent uncontrolled experimentation from inflating monthly bills.
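
A budget alert boils down to two checks: which spending thresholds have already been crossed, and whether the current pace will exceed the budget by month end. The sketch below uses a simple linear forecast and hypothetical figures; cloud billing tools offer richer forecasting, and their configuration differs by provider:

```python
def budget_alerts(spend_to_date, monthly_budget, day_of_month,
                  days_in_month=30, thresholds=(0.5, 0.8, 1.0)):
    """Report crossed thresholds and a linear end-of-month forecast."""
    used = spend_to_date / monthly_budget
    crossed = [t for t in thresholds if used >= t]
    # Linear projection: assume the daily burn rate stays constant.
    forecast = spend_to_date / day_of_month * days_in_month
    return {
        "crossed": crossed,
        "forecast": forecast,
        "over_budget": forecast > monthly_budget,
    }


# Hypothetical: 6,000 spent by day 12 against a 10,000 monthly budget.
status = budget_alerts(spend_to_date=6_000, monthly_budget=10_000, day_of_month=12)
```

In this example only the 50% threshold has been crossed, yet the forecast of 15,000 already exceeds the budget. Alerting on the projection as well as on actual spend gives the team time to react before the overrun happens.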

Architectural Impact

Design choices shape long-term cost. Serverless inference reduces idle overhead for unpredictable traffic. Container-based deployments improve resource utilization. Managed services may appear higher in direct pricing, but they often reduce operational burden and hidden infrastructure maintenance costs.

Why Cloud Machine Learning Projects Fail

Even with scalable infrastructure and advanced tooling, many cloud-based machine learning initiatives fail to deliver expected outcomes.

Failures rarely stem from algorithms alone. They usually result from operational gaps, poor governance, and unrealistic implementation assumptions.

- Unclear Business Objectives: Projects often begin without clearly defined success metrics or measurable KPIs. Without alignment between model outputs and business value, even technically accurate models fail to create impact.

- Poor Data Quality: Inconsistent, biased, or incomplete data leads to unreliable predictions. Cloud scalability cannot compensate for flawed datasets or weak feature engineering practices.

- Overestimated Infrastructure Assumptions: Teams assume elastic cloud resources automatically guarantee performance. Without proper architecture planning, systems face latency issues, cost overruns, or scaling bottlenecks.

- Lack of Monitoring and Drift Detection: Models degrade over time as data patterns shift. Without continuous monitoring, performance declines silently, affecting decision quality before teams notice.

- Weak Governance and Access Controls: Insufficient role management and audit mechanisms create security vulnerabilities and compliance risks, especially in regulated industries.

- Vendor Lock-In Constraints: Heavy dependence on proprietary services can limit portability. Migrating workloads later becomes complex and expensive.

- Skill Gaps and Operational Immaturity: Successful cloud machine learning requires expertise in infrastructure, data engineering, and lifecycle management. Lack of internal capability slows deployment and increases failure risk.

- Cost Mismanagement: Uncontrolled experimentation, idle compute instances, and misconfigured endpoints inflate cloud bills, forcing projects to be scaled back prematurely.

Future Trends in Machine Learning in the Cloud

After examining the risks and operational realities of cloud-based ML systems, it is important to look ahead. The future of machine learning in the cloud is shaped by advancing infrastructure models, stricter governance demands, and increasingly large-scale AI workloads.

- Rapid Market Expansion: The global cloud machine learning market is valued at approximately USD 17.56 billion in 2026. It is projected to grow at a CAGR of about 33.6% through 2035. This reflects intensifying demand for scalable, service-oriented ML solutions across industries.

- Cloud Infrastructure Growth: Global cloud infrastructure spending reached about USD 90.9 billion in Q1 2025, growing 21% year over year, with continued strong ecosystem momentum. This growth underpins machine learning capacity and supports broader adoption of ML workflows in the cloud.

- Shift Toward Hybrid and Sovereign Cloud: Investment in sovereign and hybrid cloud infrastructure is rising sharply due to compliance and data residency requirements. Major regions like Europe are expanding local cloud capabilities, shaping how machine learning workloads are hosted and governed.

- Advanced Deployment Patterns: Machine learning systems are moving beyond traditional training and inference toward distributed and edge-augmented models. Edge computing integrations and heterogeneous compute strategies improve latency and efficiency for real-time AI scenarios.

- AI Governance and Responsible ML: As adoption scales, frameworks for bias mitigation, explainability, and regulatory compliance are becoming integral to cloud ML pipelines. Enterprise demand for transparency and auditability is reshaping how models are built and deployed.

- MLOps and Lifecycle Automation: Enterprise focus is shifting to strong machine learning operations (MLOps) that streamline lifecycle management, version control, retraining, and monitoring. This trend emphasizes reliability and repeatability for production systems.

Machine Learning in the Cloud: Implementation with Webisoft

Cloud machine learning only creates value when architecture, cost control, and operational discipline work together. That requires more than model training. It requires deliberate system design.

At Webisoft, we implement machine learning in the cloud as production infrastructure, not as an experimentation environment.

Business-Aligned Model Strategy

Before touching infrastructure, we define what success looks like. Every system we build ties directly to a measurable business outcome. We clarify:

- The operational decision the model will influence

- The KPI it must improve

- The acceptable error threshold

- The expected ROI timeline

This prevents technically impressive systems that deliver no real impact.

Cloud Architecture Designed for Scale

We design complete pipelines, not isolated models. That includes ingestion, processing, training, deployment, and monitoring layers. Our architecture planning includes:

- Distributed training where it is justified

- GPU allocation based on workload intensity

- Storage tier mapping for cost efficiency

- Secure networking and access controls

The result is infrastructure that scales predictably instead of reactively.

Production Deployment That Holds Under Load

A model must survive production traffic, not just test datasets. We implement structured deployment processes that remove guesswork. This includes:

- Containerized model packaging

- Managed inference endpoints

- Auto-scaling thresholds

- Real-time logging and health checks

Deployment is treated as an engineering discipline, not a final step.

Cost-Controlled Infrastructure from Day One

Cloud flexibility can inflate budgets without visibility. We embed cost awareness into the system design phase. We actively manage:

- Instance sizing and workload matching

- Idle compute elimination

- Batch vs real-time inference decisions

- Storage lifecycle rules

Performance is optimized without uncontrolled spending.

Continuous Monitoring and Model Stability

Production systems change as data changes. Stability requires structured oversight. We implement:

- Accuracy monitoring dashboards

- Drift detection triggers

- Version-controlled retraining workflows

- Rollback mechanisms when performance declines

This ensures the model remains reliable over time.

Integration Into Your Existing Ecosystem

Machine learning should operate within your operational systems, not outside them. We integrate with:

- Enterprise APIs

- Data warehouses

- Business intelligence dashboards

- Internal tools and workflows

Outputs become actionable insights, not isolated predictions.

Building scalable pipelines, controlling cloud costs, and maintaining model stability require deliberate engineering, not trial and error.

Continue the conversation through the Webisoft contact page, and let’s design a cloud machine learning system that fits your infrastructure, data maturity, and growth plans.

Conclusion

In the end, machine learning in the cloud is not about infrastructure access. It is about building intelligent systems that remain reliable, scalable, and economically sustainable over time.

The organizations that succeed are not the ones with the most tools, but the ones with disciplined architecture and operational clarity. That level of execution requires more than experimentation.

At Webisoft, we design and deploy cloud-based ML systems that perform under pressure and deliver measurable results. When you are ready to implement machine learning in the cloud with precision, we are ready to build it right.

Frequently Asked Questions

Can startups use machine learning in the cloud?

Yes. Cloud platforms give startups access to scalable compute, managed ML services, and GPU resources without heavy upfront investment.

This allows early-stage teams to experiment, iterate, and deploy models quickly while keeping infrastructure costs aligned with growth.

How secure is machine learning in the cloud?

Cloud providers offer built-in security features such as encryption, identity and access management, and compliance certifications.

However, overall security depends on proper configuration, governance policies, and continuous monitoring of data, models, and infrastructure.

What industries benefit most from cloud ML?

Industries handling large volumes of data gain significant value from cloud ML. Finance, healthcare, retail, manufacturing, logistics, and SaaS companies use cloud-based models for fraud detection, predictive maintenance, personalization, automation, and operational forecasting.