Machine Learning in Networking: Concepts and Uses

- March 3, 2026

Modern networks operate at machine speed, yet most monitoring still reacts like it’s 2005. When traffic patterns shift or congestion builds, manual rules struggle to keep up. Machine learning in networking changes that equation.

Instead of waiting for thresholds to break, learning systems detect patterns, correlations, and early anomalies hidden inside complex telemetry streams.

As a result, networks move from reactive troubleshooting to intelligent anticipation. But how does that shift actually work inside live environments?

In the sections ahead, we break down the models, architectures, and real-world use cases that turn raw network data into adaptive, production-ready intelligence.

Contents

- 1 What is Machine Learning in Networking?

- 2 Why Modern Network Architectures Depend on Machine Learning

- 2.1 Software-Defined and Cloud-Native Networks Introduce Continuous Change

- 2.2 Encrypted and East-West Traffic Reduces Traditional Visibility

- 2.3 High-Dimensional Telemetry Exceeds Human Analysis Capacity

- 2.4 Dynamic Traffic Patterns Break Static Threshold Models

- 2.5 Real-Time Service Expectations Require Predictive Intelligence

- 3 Key Applications of Machine Learning in Networking

- 4 Machine Learning Models Used in Networking Systems

- 4.1 Supervised Learning Models for Network Classification and Detection

- 4.2 Unsupervised Learning for Network Anomaly Discovery

- 4.3 Time-Series Models for Network Forecasting

- 4.4 Graph-Based Learning for Topology-Aware Networking

- 4.5 Reinforcement Learning for Adaptive Network Control

- 4.6 Hybrid and Ensemble Models in Networking

- 5 Engineer Intelligent Networks That Think Ahead.

- 6 Data Pipeline and Deployment Architecture for Machine Learning in Networking

- 7 Benefits of Machine Learning in Networking Projects

- 8 Challenges of Using Machine Learning in Networking

- 8.1 Data Quality and Labeling Difficulties

- 8.2 Lack of Representative and Diverse Training Data

- 8.3 Concept and Model Drift

- 8.4 Explainability and Trust Issues

- 8.5 High Computational and Operational Cost

- 8.6 Performance Constraints in Real-Time Networks

- 8.7 Integration and Organizational Challenges

- 8.8 Security and Adversarial Risks

- 9 How to Start Implementing Machine Learning in Networking

- 9.1 Define Clear Networking Objectives

- 9.2 Prepare and Inventory Network Data

- 9.3 Select Initial Use Cases with Low Risk and High Value

- 9.4 Choose Suitable Machine Learning Techniques

- 9.5 Develop and Validate Models

- 9.6 Integrate with Monitoring and Operations Systems

- 9.7 Establish Feedback and Refinement Loops

- 9.8 Scale Beyond Pilot Projects

- 10 How Webisoft Helps Organizations Apply ML in Networking

- 10.1 We Understand Your Networking Objectives First

- 10.2 We Build Networking-Specific Data Foundations

- 10.3 We Develop Models Aligned with Real Network Behavior

- 10.4 We Deploy Within Live Networking Environments Safely

- 10.5 We Continuously Adapt as Your Network Evolves

- 10.6 We Deliver Long-Term Networking Intelligence

- 11 Engineer Intelligent Networks That Think Ahead.

- 12 Conclusion

- 13 Frequently Asked Questions

What is Machine Learning in Networking?

Machine learning in networking is the application of learning algorithms to network-generated data such as traffic flows, device telemetry, routing updates, and performance metrics.

These algorithms analyze patterns in large volumes of dynamic network data and generate predictions, classifications, or anomaly signals without relying solely on predefined rules.

Rather than reacting to fixed thresholds, ML-driven systems continuously learn from network behavior. This allows them to interpret complex traffic patterns, detect deviations from normal activity, and support more adaptive network management.

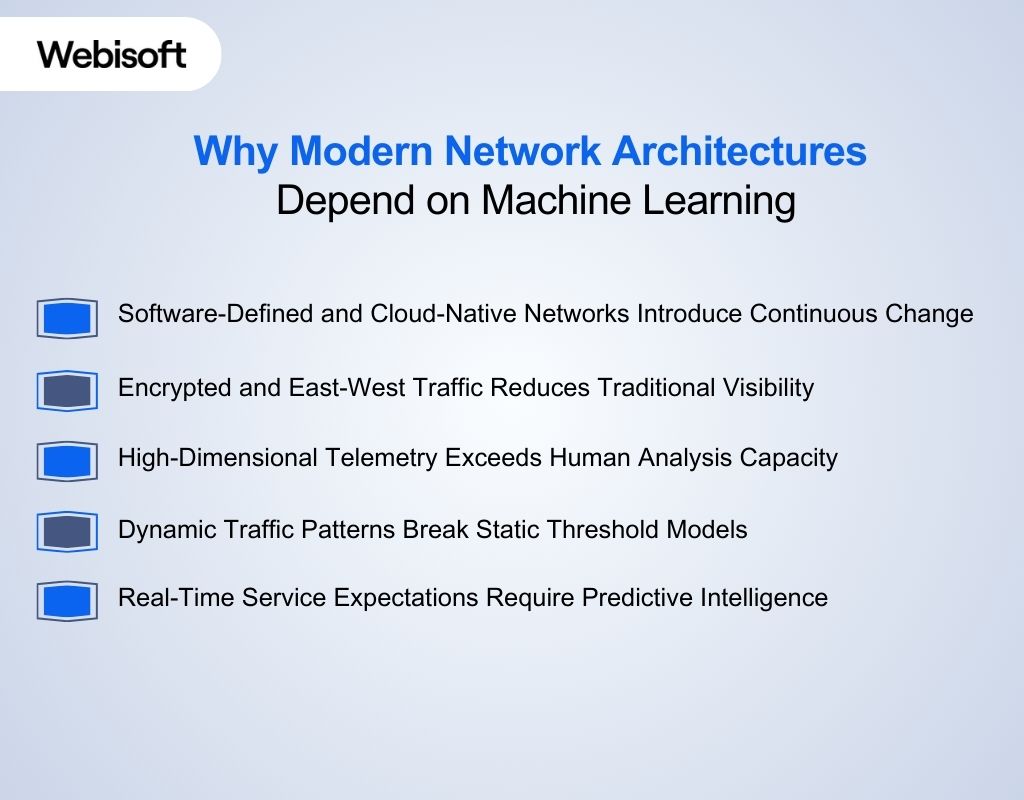

Why Modern Network Architectures Depend on Machine Learning

Modern network environments are distributed, software-defined, encrypted, and continuously evolving. Static configurations and threshold-based monitoring cannot respond fast enough to shifting traffic flows, topology changes, and workload variability. Machine learning enables adaptive analysis and scalable decision support.

Software-Defined and Cloud-Native Networks Introduce Continuous Change

SDN, virtualization, containers, and hybrid cloud infrastructures constantly modify routing paths, resource allocation, and traffic behavior.

Manual rule updates struggle to keep pace. Machine learning adapts to these shifting patterns and helps maintain operational consistency without constant reconfiguration.

Encrypted and East-West Traffic Reduces Traditional Visibility

Widespread encryption and microservices-based communication limit deep packet inspection. Payload visibility is no longer reliable, and perimeter-based monitoring misses east-west traffic that never crosses the network edge.

Modern architectures depend on behavioral analysis of flow metadata, timing, and statistical patterns, which machine learning can interpret more effectively.

High-Dimensional Telemetry Exceeds Human Analysis Capacity

Streaming telemetry, logs, and performance metrics generate large, multi-variable datasets across distributed environments.

Traditional monitoring relies on isolated alerts and fixed thresholds. Machine learning identifies correlations across metrics and detects subtle deviations that manual analysis often misses.

Dynamic Traffic Patterns Break Static Threshold Models

Autoscaling workloads, global user bases, and edge computing create unpredictable traffic shifts. Fixed baselines either trigger excessive alerts or fail to capture emerging congestion risks. Machine learning establishes adaptive baselines that evolve with real-time network behavior.
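A minimal sketch of an adaptive baseline, assuming a single numeric metric such as interface utilization sampled at a fixed interval. It keeps an exponentially weighted mean and variance and flags samples that fall outside a k-sigma band. `AdaptiveBaseline`, `alpha`, `k`, and `warmup` are illustrative names and defaults, not tuned values or any product's API.

```python
import math


class AdaptiveBaseline:
    """Exponentially weighted mean/variance baseline for a single metric.

    alpha: how quickly the baseline follows new samples (illustrative default).
    k: how many standard deviations count as abnormal (illustrative default).
    warmup: samples to observe before any alert is raised.
    """

    def __init__(self, alpha: float = 0.1, k: float = 3.0, warmup: int = 10):
        self.alpha = alpha
        self.k = k
        self.warmup = warmup
        self.mean = None
        self.var = 0.0
        self.count = 0

    def update(self, x: float) -> bool:
        """Score x against the current baseline, then fold x into it.

        Returns True if x deviates by more than k standard deviations.
        """
        self.count += 1
        if self.mean is None:  # the first sample initializes the baseline
            self.mean = x
            return False
        deviation = x - self.mean
        std = math.sqrt(self.var)
        is_anomaly = self.count > self.warmup and abs(deviation) > self.k * max(std, 1e-9)
        # Incremental exponentially weighted update of mean and variance.
        increment = self.alpha * deviation
        self.mean += increment
        self.var = (1 - self.alpha) * (self.var + deviation * increment)
        return is_anomaly
```

Because every sample is folded back into the baseline, gradual shifts such as steady traffic growth are absorbed over time, while sudden jumps exceed the band. A production system might keep separate baselines per interface and per time of day.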

Real-Time Service Expectations Require Predictive Intelligence

Modern enterprises operate under strict uptime and latency requirements. Reactive monitoring detects issues after service degradation begins.

Network architectures increasingly depend on predictive models to anticipate saturation, routing instability, and performance anomalies before they affect users.

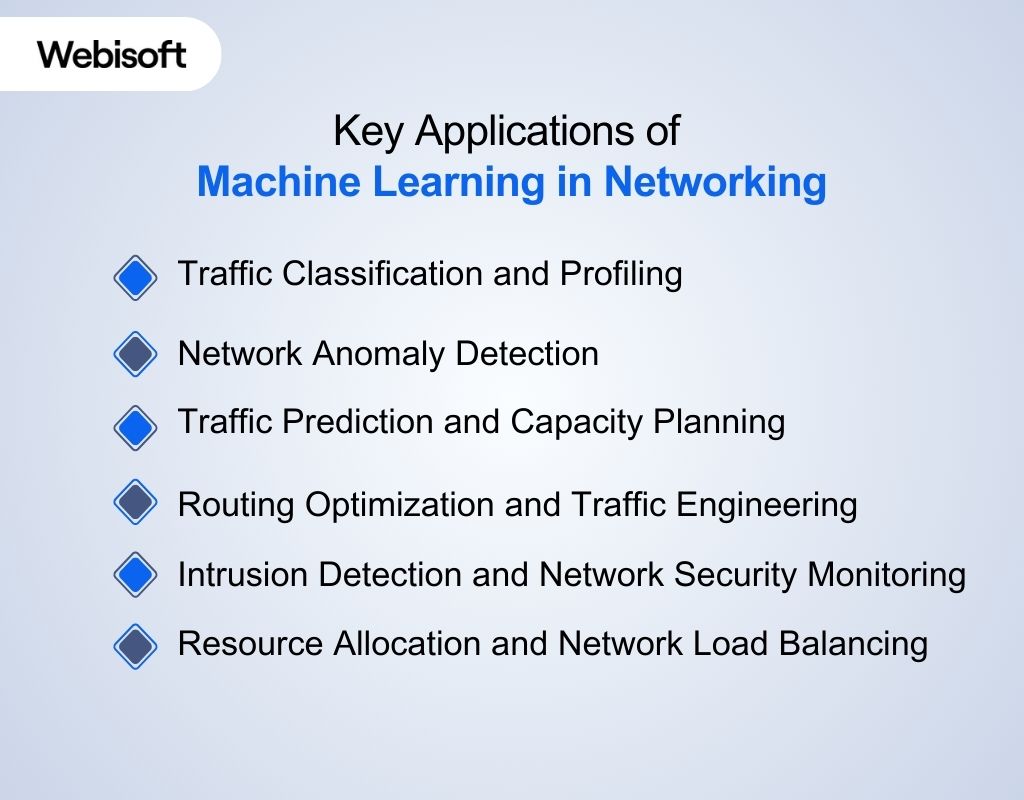

Key Applications of Machine Learning in Networking

Modern networks generate large volumes of behavioral, performance, and routing data. Machine learning turns this raw data into structured intelligence that supports operational visibility, predictive planning, and adaptive network control across distributed infrastructures.

Traffic Classification and Profiling

Machine learning enables identification of application types and communication patterns by analyzing statistical characteristics of network flows.

- Uses flow-level features such as packet timing, size distribution, and session duration

- Helps enforce application-aware routing and bandwidth policies

- Improves visibility into shadow IT and unmanaged traffic
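As an illustration of flow-level features, the sketch below summarizes one flow from per-packet timestamps and sizes. It assumes packets have already been grouped into flows (for example, by 5-tuple); the `Packet` type and the feature names are hypothetical, and none of the features require payload access.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Packet:
    timestamp: float  # seconds
    size: int         # bytes


def flow_features(packets: list[Packet]) -> dict[str, float]:
    """Summarize one flow as statistical features that do not depend on payload."""
    if len(packets) < 2:
        raise ValueError("need at least two packets to compute timing features")
    packets = sorted(packets, key=lambda p: p.timestamp)
    sizes = [p.size for p in packets]
    # Inter-arrival gaps between consecutive packets.
    gaps = [b.timestamp - a.timestamp for a, b in zip(packets, packets[1:])]
    return {
        "packet_count": float(len(packets)),
        "total_bytes": float(sum(sizes)),
        "mean_size": statistics.fmean(sizes),
        "std_size": statistics.pstdev(sizes),
        "mean_interarrival": statistics.fmean(gaps),
        "duration": packets[-1].timestamp - packets[0].timestamp,
    }
```

A feature dictionary like this one, computed for many labeled flows, is the kind of input a traffic classifier would be trained on.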

Network Anomaly Detection

ML models establish behavioral baselines across interfaces, flows, or devices and detect deviations that indicate faults or abnormal activity. This approach supports early issue discovery in complex environments.

- Identifies subtle deviations across multiple correlated metrics

- Detects misconfigurations and sudden traffic spikes

- Flags abnormal behavior without relying on predefined thresholds

Traffic Prediction and Capacity Planning

Machine learning analyzes historical traffic trends to estimate future demand and utilization patterns. This helps organizations prepare infrastructure changes before service degradation occurs.

- Models recurring usage cycles and growth trends

- Estimates link saturation timelines

- Supports informed decisions on scaling and upgrades

Routing Optimization and Traffic Engineering

ML supports adaptive decision-making in traffic distribution by learning from previous congestion patterns and route performance metrics.

- Assists in dynamic load balancing across paths

- Improves path selection under variable demand

- Supports policy-driven optimization strategies

Intrusion Detection and Network Security Monitoring

Machine learning enhances network security monitoring by analyzing traffic behavior rather than relying only on known threat signatures.

- Detects previously unseen attack patterns

- Identifies lateral movement across internal segments

- Prioritizes suspicious flows based on anomaly scores

Resource Allocation and Network Load Balancing

Machine learning enables dynamic allocation of network resources based on traffic demand, usage behavior, and infrastructure conditions.

Instead of relying on static configurations, ML-driven systems adjust bandwidth distribution and path utilization in response to real-time conditions.

- Allocates bandwidth based on traffic priority and demand patterns

- Supports intelligent load balancing across distributed nodes

- Reduces overutilization on critical links

- Improves overall network efficiency under fluctuating workloads
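One way ML output can drive allocation is to feed per-class demand forecasts into a classical weighted max-min fair-share algorithm. The sketch below assumes the forecasts and priority weights are already available; class names, weights, and the function name are hypothetical, and the allocator itself is a standard fairness scheme, not a learned model.

```python
def weighted_max_min(capacity: float, demands: dict[str, float],
                     weights: dict[str, float]) -> dict[str, float]:
    """Weighted max-min fair split of link capacity.

    demands: forecast demand per traffic class (e.g., from an ML model).
    weights: priority weight per traffic class.
    """
    alloc = {k: 0.0 for k in demands}
    active = {k for k in demands if demands[k] > 0}
    remaining = capacity
    while active and remaining > 1e-12:
        total_weight = sum(weights[k] for k in active)
        share = {k: remaining * weights[k] / total_weight for k in active}
        # Classes whose unmet demand fits inside their fair share are fully served.
        satisfied = {k for k in active if demands[k] - alloc[k] <= share[k]}
        if not satisfied:
            # Everyone wants more than their share: hand out the shares and stop.
            for k in active:
                alloc[k] += share[k]
            break
        for k in satisfied:
            remaining -= demands[k] - alloc[k]
            alloc[k] = demands[k]
        active -= satisfied
    return alloc
```

With 100 Mbps of capacity, forecast demands of 10, 60, and 80 Mbps for voice, video, and bulk, and weights 4, 2, and 1, voice and video are fully served and bulk receives the remaining 30 Mbps.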

Machine Learning Models Used in Networking Systems

Machine learning models provide the analytical backbone for tasks such as classification, prediction, and anomaly detection in modern networks.

Different ML model families are suited to distinct types of networking data and problem characteristics, enabling tailored analysis and decision support.

Supervised Learning Models for Network Classification and Detection

Supervised models are widely used in networking when labeled datasets are available, such as known attack signatures, categorized traffic types, or documented failure events.

- Decision Trees and Random Forests are commonly applied to flow-based traffic classification and intrusion detection because of their fast inference; single trees are also easy to interpret.

- Support Vector Machines (SVM) are used for separating normal and abnormal network sessions in high-dimensional flow datasets.

- Gradient Boosting Models improve detection accuracy in network anomaly and threat identification tasks where feature relationships are complex.

These models are practical for environments where historical labeled network events exist.
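To make the decision-tree idea concrete, here is a depth-one tree (a decision stump) trained by minimizing Gini impurity over labeled flows. It is a teaching sketch, assuming two hypothetical features (mean packet size and packets per second) and binary labels (0 = normal, 1 = attack). In practice you would more likely use a library implementation such as scikit-learn's `DecisionTreeClassifier` or `RandomForestClassifier`, which grow deeper trees and ensembles.

```python
def gini(labels: list[int]) -> float:
    """Gini impurity for binary labels: 2 * p * (1 - p)."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2 * p * (1 - p)


def fit_stump(X: list[list[float]], y: list[int]):
    """Pick the single feature/threshold split with the lowest weighted Gini impurity."""
    best = (float("inf"), None, None)
    n = len(y)
    for f in range(len(X[0])):
        for t in sorted({row[f] for row in X}):
            left = [lbl for row, lbl in zip(X, y) if row[f] <= t]
            right = [lbl for row, lbl in zip(X, y) if row[f] > t]
            score = (len(left) * gini(left) + len(right) * gini(right)) / n
            if score < best[0]:
                best = (score, f, t)
    _, feature, threshold = best
    # Each side of the split predicts its majority label.
    left = [lbl for row, lbl in zip(X, y) if row[feature] <= threshold]
    right = [lbl for row, lbl in zip(X, y) if row[feature] > threshold]
    left_label = int(sum(left) * 2 > len(left)) if left else 0
    right_label = int(sum(right) * 2 > len(right)) if right else 0
    return feature, threshold, left_label, right_label


def predict(stump, row: list[float]) -> int:
    feature, threshold, left_label, right_label = stump
    return left_label if row[feature] <= threshold else right_label
```

Trained on a few benign flows with large packets and a few flood-like flows with tiny packets at high rates, the stump splits on mean packet size, and its single threshold is easy for an engineer to read and audit.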

Unsupervised Learning for Network Anomaly Discovery

In many networking scenarios, labeled incident data is limited. Unsupervised models help detect unknown or emerging behaviors without predefined categories.

- K-Means and other clustering algorithms group similar flow patterns, so flows that fit no cluster well can be flagged as outliers.

- Isolation Forest isolates rare or abnormal network sessions in large-scale telemetry datasets.

- Autoencoders learn normal traffic representations and flag deviations through reconstruction error.

These models are especially useful for zero-day anomaly detection and behavioral monitoring.
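A minimal sketch of clustering-based outlier detection, assuming flows are already represented as numeric feature vectors on comparable scales. It fits plain k-means (Lloyd's algorithm) on normal traffic, using a deterministic farthest-first initialization for reproducibility, then scores new flows by distance to the nearest centroid. Function names are hypothetical; libraries such as scikit-learn provide `KMeans` and `IsolationForest` for production use.

```python
import math


def farthest_first_init(points, k):
    """Deterministic seeding: start at the first point, then repeatedly add the farthest point."""
    centroids = [list(points[0])]
    while len(centroids) < k:
        farthest = max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        centroids.append(list(farthest))
    return centroids


def kmeans(points, k, iterations=20):
    """Lloyd's algorithm: alternate nearest-centroid assignment and centroid recomputation."""
    centroids = farthest_first_init(points, k)
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        for i, members in enumerate(clusters):
            if members:  # keep the previous centroid if a cluster ends up empty
                centroids[i] = [sum(dim) / len(members) for dim in zip(*members)]
    return centroids


def outlier_scores(points, centroids):
    """Distance from each point to its nearest centroid; large values suggest anomalies."""
    return [min(math.dist(p, c) for c in centroids) for p in points]
```

A flow far from every learned cluster gets a large score; the alert threshold would need to be calibrated on held-out normal traffic.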

Time-Series Models for Network Forecasting

Network performance metrics such as bandwidth utilization, packet loss, and latency are time-dependent. Time-series models are designed to capture temporal structure.

- ARIMA and other statistical forecasting models are used for bandwidth trend estimation in capacity planning.

- LSTMs and other recurrent neural networks capture sequential dependencies in traffic flows and performance fluctuations.

- Temporal convolution models analyze high-frequency telemetry for short-term congestion prediction.

These models support proactive infrastructure planning and resource scaling.
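Full ARIMA or LSTM models are beyond a short example, so the sketch below swaps in Holt's linear-trend exponential smoothing, a simpler statistical forecaster that tracks a level and a trend. The smoothing parameters `alpha` and `beta` are illustrative rather than tuned, and the function name is hypothetical.

```python
def holt_forecast(series: list[float], alpha: float = 0.5, beta: float = 0.3,
                  horizon: int = 3) -> list[float]:
    """Holt's linear-trend exponential smoothing forecast for the next `horizon` steps."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level, trend = series[0], series[1] - series[0]
    for y in series[1:]:
        prev_level = level
        # Blend the new observation with the previous one-step-ahead estimate.
        level = alpha * y + (1 - alpha) * (level + trend)
        # Blend the latest level change with the previous trend.
        trend = beta * (level - prev_level) + (1 - beta) * trend
    return [level + h * trend for h in range(1, horizon + 1)]
```

On a steadily growing series such as `[10, 12, 14, 16, 18]`, the forecast for the next three intervals is approximately `[20, 22, 24]`.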

Graph-Based Learning for Topology-Aware Networking

Networks naturally form graph structures consisting of nodes and links. Graph-based models leverage this topology directly.

- Graph Neural Networks (GNNs) model relationships between routers, switches, and endpoints to detect structural anomalies.

- Graph embeddings represent network topology changes for routing stability analysis.

- These models support topology-aware failure detection and intelligent routing decisions.

Graph learning is increasingly important in SDN and data center environments.
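GNN layers are built on message passing, in which each node aggregates information from its neighbors. The sketch below shows one round of that aggregation with no learned weights, comparing each device's metric (for example, latency in milliseconds) with the mean of its neighbors. The adjacency format and function name are assumptions for illustration; a real GNN would stack several such layers with trainable transformations.

```python
import statistics


def neighbor_deviation(adjacency: dict[str, list[str]],
                       metric: dict[str, float]) -> dict[str, float]:
    """One round of mean neighbor aggregation (message passing without learned weights).

    Returns, per node, how far its metric sits from the mean of its neighbors.
    """
    scores = {}
    for node, neighbors in adjacency.items():
        if not neighbors:
            scores[node] = 0.0  # isolated node: nothing to compare against
            continue
        neighborhood_mean = statistics.fmean(metric[n] for n in neighbors)
        scores[node] = metric[node] - neighborhood_mean
    return scores
```

In a star topology where one access router reports 40 ms while its peers report about 3 ms, that router gets by far the highest score.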

Reinforcement Learning for Adaptive Network Control

Reinforcement learning is applied when networking systems must learn optimal control policies under changing conditions.

- Applied to adaptive routing and traffic engineering.

- Learns congestion-aware path selection strategies.

- Optimizes multi-objective metrics such as latency, throughput, and fairness.

These models are suitable for environments where static routing policies cannot adapt quickly enough.
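Full reinforcement learning is hard to show briefly, so this sketch uses an epsilon-greedy multi-armed bandit, a simplified relative of RL that has actions and rewards but no network state. It assumes a set of candidate paths and an observed latency per choice; the class name, the reward definition (negative latency), and the `epsilon` value are illustrative.

```python
import random


class EpsilonGreedyPathSelector:
    """Epsilon-greedy bandit over candidate paths (a simplified stand-in for full RL)."""

    def __init__(self, paths, epsilon: float = 0.1, seed=None):
        self.paths = list(paths)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {p: 0 for p in self.paths}
        self.mean_reward = {p: 0.0 for p in self.paths}

    def choose(self) -> str:
        untried = [p for p in self.paths if self.counts[p] == 0]
        if untried:  # try every path once before comparing them
            return untried[0]
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.paths)  # explore
        return max(self.paths, key=lambda p: self.mean_reward[p])  # exploit

    def update(self, path: str, latency_ms: float) -> None:
        reward = -latency_ms  # lower latency means higher reward
        self.counts[path] += 1
        # Incremental sample average of rewards observed on this path.
        self.mean_reward[path] += (reward - self.mean_reward[path]) / self.counts[path]
```

Over many rounds the selector mostly picks the lowest-latency path while still occasionally probing the others. A stateful RL agent would additionally condition its choice on current conditions such as link utilization.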

Hybrid and Ensemble Models in Networking

Large-scale network environments often combine multiple model types to improve reliability and reduce false positives.

- Ensemble models combine supervised and unsupervised outputs for more stable anomaly detection.

- Hybrid architectures integrate statistical baselines with deep learning for more robust monitoring.

- Layered detection systems improve operational trust and interpretability.

Such combinations are common in enterprise-grade networking systems.

Engineer Intelligent Networks That Think Ahead.

Design, deploy, and scale ML-driven networking with Webisoft experts!

Data Pipeline and Deployment Architecture for Machine Learning in Networking

AI and machine learning in networking depend on a continuous flow of telemetry, structured transformation of network signals, and tightly controlled deployment environments.

Unlike generic ML systems, networking pipelines must operate under real-time constraints and integrate directly with operational infrastructure.

1. Network Signal Acquisition

The pipeline begins inside the network itself. Routers, switches, firewalls, and controllers emit flow records, telemetry streams, routing updates, and performance counters.

These signals are collected either through streaming telemetry protocols or centralized collectors. The goal at this stage is reliability and completeness.

Records dropped in the collection pipeline, such as lost flow exports, can distort model behavior. Time synchronization across devices is equally critical, since many networking models rely on temporal correlations.

2. Stream Processing and Feature Construction

Raw network data is rarely model-ready. It must be aggregated, normalized, and transformed into structured representations. This stage may include:

- Converting raw flow records into time-windowed summaries

- Extracting statistical indicators such as variance, entropy, or rate of change

- Mapping network entities into structured identifiers

- Aligning multi-source telemetry into unified timelines

Feature construction determines how effectively the model can interpret network behavior.
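The feature-construction steps above can be sketched as follows, assuming flow records arrive as `(timestamp_s, dst_port, bytes)` tuples (a hypothetical layout). Each fixed time window gets flow and byte counts plus the Shannon entropy of destination ports, which tends to rise when traffic spreads across many ports, as in a port scan. The function name and 60-second window are illustrative.

```python
import math
from collections import Counter, defaultdict


def window_features(flows, window_s: int = 60) -> dict[int, dict[str, float]]:
    """Aggregate (timestamp_s, dst_port, bytes) flow records into per-window features."""
    windows = defaultdict(list)
    for ts, port, nbytes in flows:
        windows[int(ts // window_s)].append((port, nbytes))
    features = {}
    for w, records in sorted(windows.items()):
        port_counts = Counter(port for port, _ in records)
        total = sum(port_counts.values())
        # Shannon entropy (bits) of the destination-port distribution in this window.
        entropy = -sum((c / total) * math.log2(c / total) for c in port_counts.values())
        features[w * window_s] = {
            "flows": total,
            "bytes": sum(n for _, n in records),
            "dst_port_entropy": entropy,
        }
    return features
```

Three flows to port 443 in one window give an entropy of 0, while four flows to four distinct ports give 2.0 bits.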

3. Model Training Environment

Training typically occurs on historical network data stored in centralized repositories. This environment must isolate experimental models from live operations. Key considerations include:

- Separating training datasets from live inference streams

- Validating model performance against operational baselines

- Testing models against historical incident patterns

- Evaluating robustness under simulated traffic variability

In networking, models must generalize across changing topologies and workload shifts.
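A sketch of one safeguard for generalization, assuming each record carries a timestamp as its first field: split training and validation data chronologically rather than randomly, so the model is always evaluated on traffic that occurred no earlier than the data it learned from. The function name and 80/20 ratio are illustrative; scikit-learn's `TimeSeriesSplit` offers a rolling variant of the same idea.

```python
def chronological_split(records, train_fraction: float = 0.8):
    """Split records so no validation record precedes any training record.

    records: sequence of tuples whose first field is a timestamp (hypothetical layout).
    """
    ordered = sorted(records, key=lambda r: r[0])
    cut = int(len(ordered) * train_fraction)
    return ordered[:cut], ordered[cut:]
```

A random split would let the model see "future" traffic patterns during training, which tends to overstate accuracy compared with how it will behave after deployment.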

4. Controlled Model Deployment

Deployment in networking environments cannot be purely experimental. Models must be introduced gradually and monitored carefully. Common approaches include:

- Deploying models in shadow mode before enabling decisions

- Integrating predictions into monitoring dashboards first

- Gradually activating automated responses

- Maintaining fallback mechanisms to traditional control logic

This ensures operational stability while introducing adaptive intelligence.
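The shadow-mode and fallback approaches above can be sketched as a small wrapper, assuming both the existing control logic and the candidate model are callables that map an event to a decision string. Only the legacy decision is enacted; the model's output is logged for comparison. All names here are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ShadowDeployment:
    """Run a candidate model next to existing control logic without letting it act."""

    legacy: Callable[[dict], str]
    candidate: Callable[[dict], str]
    log: list[tuple[str, str]] = field(default_factory=list)

    def decide(self, event: dict) -> str:
        legacy_decision = self.legacy(event)
        try:
            shadow_decision = self.candidate(event)
        except Exception as exc:  # a failing model must never break the live path
            shadow_decision = f"error: {exc}"
        self.log.append((legacy_decision, shadow_decision))
        return legacy_decision  # only the existing logic's decision is enacted

    def agreement_rate(self) -> float:
        """Fraction of events where the candidate matched the legacy decision."""
        if not self.log:
            return 0.0
        return sum(a == b for a, b in self.log) / len(self.log)
```

Tracking `agreement_rate()` over time, and reviewing the cases where the two disagree, gives operators concrete evidence before any automated response is switched on.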

5. Real-Time Inference Layer

Once deployed, models operate on incoming telemetry streams. Inference may occur centrally in a data center or closer to the network edge, depending on latency requirements. Deployment location is influenced by:

- Required response time

- Bandwidth constraints

- Device compute capacity

- Security policies

Networking ML systems often combine centralized analysis with localized decision support.

6. Continuous Monitoring and Model Governance

Network behavior evolves due to infrastructure changes, policy updates, or traffic growth. Without oversight, model accuracy can degrade. A mature deployment includes:

- Performance monitoring of prediction accuracy

- Drift detection in input distributions

- Version control for model updates

- Periodic retraining using recent data

This governance layer ensures that machine learning remains aligned with operational reality.
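Drift detection in input distributions can be sketched with the Population Stability Index (PSI), one common choice among several. This version assumes a single numeric feature, with bins derived from the range of the training sample; the function name, bin count, and epsilon are illustrative. A frequently cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but thresholds should be validated per feature.

```python
import math


def population_stability_index(expected: list[float], actual: list[float],
                               bins: int = 10) -> float:
    """PSI between a training-time sample and a live sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant training sample

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values into edge bins
        return [max(c / len(values), 1e-6) for c in counts]  # epsilon avoids log(0)

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Comparing each day's live inputs against the training sample this way gives a simple, per-feature trigger for the retraining step listed above.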

Benefits of Machine Learning in Networking Projects

Modern networking projects operate in environments where scale, variability, and performance expectations are constantly increasing.

Machine learning introduces adaptive intelligence that improves reliability, efficiency, and long-term operational sustainability across distributed network infrastructures.

- Reduced Network Downtime: Machine learning identifies early warning patterns in performance metrics, allowing teams to address emerging issues before they escalate into outages or service disruptions.

- Faster Incident Resolution: Automated pattern recognition shortens the time required to detect and isolate abnormal behavior, improving mean time to detect and mean time to resolve.

- Improved Operational Efficiency: By automating analysis of large telemetry datasets, ML reduces manual troubleshooting efforts and minimizes repetitive monitoring tasks.

- Better Infrastructure Utilization: ML-driven insights help balance workloads and distribute resource usage more effectively, preventing underutilization and avoiding unnecessary infrastructure expansion.

- Higher Network Stability: Adaptive learning models adjust to changing network conditions, reducing instability caused by static configurations in dynamic environments.

- Stronger Security Resilience: Behavioral modeling enhances the ability to recognize suspicious activity patterns, improving protection against evolving network threats.

- Scalable Decision-Making: ML systems process data volumes that exceed human capacity, enabling consistent decision support across multi-site and hybrid network deployments.

- Data-Driven Strategic Planning: Predictive insights derived from network behavior support informed long-term investment, scaling, and architecture planning decisions.

Challenges of Using Machine Learning in Networking

Machine learning offers powerful automation and insights for modern networks, but integrating it into real-world networking systems brings unique obstacles.

These challenges arise from data limitations, evolving network conditions, model reliability concerns, and organizational constraints.

Data Quality and Labeling Difficulties

High-quality, representative, and labeled network data is rare. Network telemetry often lacks standardized labeling, making it hard to train supervised ML models that require ground truth datasets. This slows model development and reduces accuracy.

Lack of Representative and Diverse Training Data

Networks generate highly variable patterns across sites, users, and traffic types. Models trained on one environment may not generalize to another, causing poor performance when applied to unseen scenarios or new traffic distributions.

Concept and Model Drift

Network behavior changes over time due to configuration updates, topology changes, or seasonal trends, leading to model drift.

Without ongoing monitoring and retraining, predictive accuracy degrades quickly in production environments.

Explainability and Trust Issues

Many ML models, especially deep learning systems, operate as “black boxes.” This opacity makes it difficult for network engineers to understand or trust predictions, a concern also highlighted in NIST’s AI Risk Management Framework, and it limits adoption in operational decision-making where clarity and accountability are important.

High Computational and Operational Cost

Training and deploying ML models at scale can be resource-intensive. Real-time inference, especially for deep models, requires significant compute and memory capacity, raising infrastructure costs and complexity.

Performance Constraints in Real-Time Networks

Networking systems have strict latency and reliability requirements. Integrating ML without impacting performance is challenging, particularly for time-sensitive decisions like congestion control or dynamic routing.

Integration and Organizational Challenges

Machine learning systems often require coordination across networking, data science, and operations teams. Misalignment in goals or workflows can hinder deployment and slow down iterations.

Security and Adversarial Risks

ML models themselves can be vulnerable to adversarial manipulation or exploitation, especially in security-critical networking applications.

Protecting models and predictions from malicious interference remains a significant concern. Once you see these challenges clearly, the next step is reducing risk before anything touches production.

At Webisoft, we deliver machine learning consulting customized to your network environment, keeping models reliable under real load and shifting traffic conditions.

How to Start Implementing Machine Learning in Networking

Implementing machine learning in networking begins with practical preparation and structured experimentation. It requires careful planning, data readiness, algorithm selection, and integration into monitoring and control workflows to deliver measurable operational value.

Define Clear Networking Objectives

Start by identifying specific networking problems where ML can add value, such as anomaly detection, traffic forecasting, or performance prediction.

Framing the problem clearly helps determine data needs, model types, and success metrics before development begins.

Prepare and Inventory Network Data

Gather and organize relevant network data sources such as telemetry streams, flow records, and performance logs. Ensure data quality, consistent timestamps, and normalization across devices; these are fundamental steps before feeding data into any ML process.

Select Initial Use Cases with Low Risk and High Value

Choose pilot projects where ML can be tested with minimal operational disruption, for example, short-term traffic forecasting or supervised classification of known patterns. Starting with controlled pilots reduces deployment complexity and accelerates learning.

Choose Suitable Machine Learning Techniques

Match algorithms to networking problems. For classification and detection tasks, consider supervised models such as Random Forests when labeled data exists, or clustering techniques when it does not; for time-based forecasting, time-series models like LSTM may be appropriate. Selecting the right model family early streamlines experimentation.

Develop and Validate Models

Build initial models using historical data and validate them against defined performance metrics. Use separate training and validation sets to ensure generalization. Continuously refine features and model parameters to improve accuracy.

Integrate with Monitoring and Operations Systems

Once models perform reliably in testing, integrate them into your observability stack or network monitoring tools. Start with passive monitoring of predictions before enabling automated responses. This phased approach builds confidence in model outputs.

Establish Feedback and Refinement Loops

Deploy mechanisms to collect feedback on model performance in real operational contexts. Track drift in input data or model output, and schedule periodic retraining to keep models relevant as network conditions evolve.

Scale Beyond Pilot Projects

After successful pilots, expand ML applications to broader areas of network operations. Use lessons learned to refine data pipelines, expand model catalogs, and optimize deployment practices.

How Webisoft Helps Organizations Apply ML in Networking

After the groundwork is complete, the focus shifts to building systems that perform consistently in production. With deep expertise in AI and network-driven architectures, Webisoft helps organizations implement machine learning with precision, stability, and long-term reliability.

We Understand Your Networking Objectives First

Before any model is built, we analyze how your network actually operates. We examine traffic patterns, telemetry signals, routing behavior, performance baselines, and operational workflows to define what measurable improvement means in your specific environment.

- Network stability and outage reduction targets

- Performance and congestion thresholds

- Security visibility and anomaly detection goals

- SLA and uptime commitments

Every machine learning initiative begins with network clarity, not abstract experimentation.

We Build Networking-Specific Data Foundations

Machine learning in networking depends on structured, reliable telemetry. We design ingestion systems that unify flow records, device metrics, routing updates, and performance logs into consistent, model-ready inputs.

- Stream and batch processing of network telemetry

- Normalization across multi-vendor devices

- Time-synchronized feature engineering

- Scalable infrastructure for high-volume network data

Your telemetry becomes structured intelligence, not fragmented signals.

We Develop Models Aligned with Real Network Behavior

Networks behave differently under load, peak demand, failover events, and topology changes. We train and validate models using your historical traffic distributions and operational patterns so predictions reflect real conditions.

- Flow-level classification and behavioral modeling

- Network anomaly detection tuned to baseline patterns

- Capacity and congestion forecasting

- Topology-aware optimization models

The result is intelligence that mirrors how your infrastructure actually performs.

We Deploy Within Live Networking Environments Safely

Production networks cannot tolerate instability. We introduce machine learning through staged deployment strategies that protect uptime and operational continuity.

- Shadow-mode model evaluation

- Gradual activation within monitoring systems

- Integration with NMS, SIEM, and incident platforms

- Rollback safeguards to preserve network stability

Machine learning becomes part of your operations, not a risk to them.

We Continuously Adapt as Your Network Evolves

Traffic patterns shift. Workloads scale. Infrastructure expands. We monitor model accuracy against live telemetry and retrain when behavioral drift is detected.

- Ongoing model performance validation

- Drift detection tied to topology or workload changes

- Scheduled retraining using updated traffic data

- Performance reporting aligned with operational metrics

Your ML systems remain aligned with real network conditions over time.

We Deliver Long-Term Networking Intelligence

Our role extends beyond deployment. We support sustained optimization, iterative refinement, and architectural alignment so machine learning continues to deliver value across your networking lifecycle.

- Advisory support for expanding ML use cases

- Optimization of routing, monitoring, and control integration

- Continuous improvement aligned with network growth

Machine learning becomes a dependable layer of intelligence across your networking infrastructure. The difference between experimenting with machine learning and operationalizing it lies in execution discipline.

If your network is ready for that step, explore how Webisoft approaches production-grade ML implementation and start a focused discussion through our contact page.

Engineer Intelligent Networks That Think Ahead.

Design, deploy, and scale ML-driven networking with Webisoft experts!

Conclusion

In closing, network complexity is not slowing down. Machine learning in networking offers a practical path toward adaptive, predictive infrastructure capable of meeting modern performance and reliability expectations.

Bringing that shift into production requires more than algorithms; it requires architectural discipline. When your organization is ready to embed intelligence into live network systems, Webisoft is equipped to help you execute with confidence.

Frequently Asked Questions

Can ML be used in wireless networks and 5G?

Yes. Machine learning is widely used in wireless and 5G networks to support dynamic spectrum allocation, traffic forecasting, and adaptive resource management. It helps optimize radio access networks by learning from real-time network conditions and user demand patterns.

Can ML work with encrypted traffic?

Yes. Even when payload data is encrypted, machine learning models can analyze flow-level metadata such as packet size, timing intervals, and session duration. These statistical patterns allow meaningful traffic classification and anomaly detection without decrypting content.

Does ML require labeled data for all networking tasks?

No. Some networking tasks rely on supervised models that require labeled examples, such as known attack types. However, unsupervised techniques can identify unusual patterns or deviations in network behavior without predefined labels.