Machine Learning in Medical Imaging: Uses & Challenges

- March 5, 2026

Machine learning in medical imaging refers to algorithms that analyze medical scans such as X-rays, CT scans, and MRIs to support diagnosis. These systems learn patterns from large image datasets and then detect abnormalities, measure lesions, and classify disease. This capability offers faster interpretation and more consistent reporting across departments. Hospitals use it to reduce diagnostic variability, prioritize urgent cases, and extract quantitative insights from scans.

However, real-world adoption also introduces challenges such as limited annotated data, model bias, regulatory approval requirements, and integration with existing systems. In this blog, we will explain how machine learning works inside imaging systems and where it delivers measurable value. We will also examine its benefits, limitations, and future direction in modern healthcare environments.

Contents

- 1 What Is Machine Learning in Medical Imaging?

- 2 How Machine Learning in Medical Imaging Works (End-to-End Pipeline)

- 3 Core Models Powering Imaging Systems

- 4 Application of Machine Learning in Medical Imaging

- 5 Benefits of Machine Learning in Medical Imaging

- 6 Build Smarter Medical Imaging Systems with Webisoft.

- 7 Challenges of Using Machine Learning in Medical Imaging

- 8 Future Trends of Machine Learning in Medical Imaging

- 9 How Webisoft Supports Medical Imaging AI Development

- 10 Build Smarter Medical Imaging Systems with Webisoft.

- 11 Conclusion

- 12 FAQs

- 12.1 1. How is machine learning used in medical imaging?

- 12.2 2. What algorithms are used in medical imaging?

- 12.3 3. Is AI replacing radiologists?

- 12.4 4. How accurate is AI in medical diagnosis?

- 12.5 5. What are the challenges of AI in healthcare imaging?

- 12.6 6. What is FDA approval for AI medical devices?

What Is Machine Learning in Medical Imaging?

Machine learning in medical imaging refers to algorithms that learn patterns from scans such as X-rays, CT scans, and MRIs to support diagnosis. These systems train on large labeled datasets so they can detect subtle changes in pixel intensity that signal disease. According to one report, deep learning models performed at a level comparable to healthcare professionals across multiple imaging tasks, which helps explain why this field has gained clinical attention.

At its core, AI in medical imaging works through structured pattern recognition. Algorithms convert images into numerical data and then analyze texture, edges, and shape to classify abnormalities. For example, one study showed that a neural network identified pneumonia on chest X-rays with performance similar to practicing radiologists. [Source: Arxiv]

This process relies heavily on medical image analysis, which extracts measurable features from scans. Models segment organs, outline tumors, and measure growth over time to support treatment decisions. According to the U.S. Food and Drug Administration, more than 500 AI-enabled medical devices had received clearance by 2023, and many focus on imaging-based diagnosis.

Unlike older rule-based CAD tools, learning-based systems in radiology can be retrained and improved as new data becomes available. Traditional systems followed fixed rules and often produced high false-positive rates, while learning-based models adapt and reduce variability. This data-driven approach explains why imaging remains one of the strongest use cases for machine learning today.

How Machine Learning in Medical Imaging Works (End-to-End Pipeline)

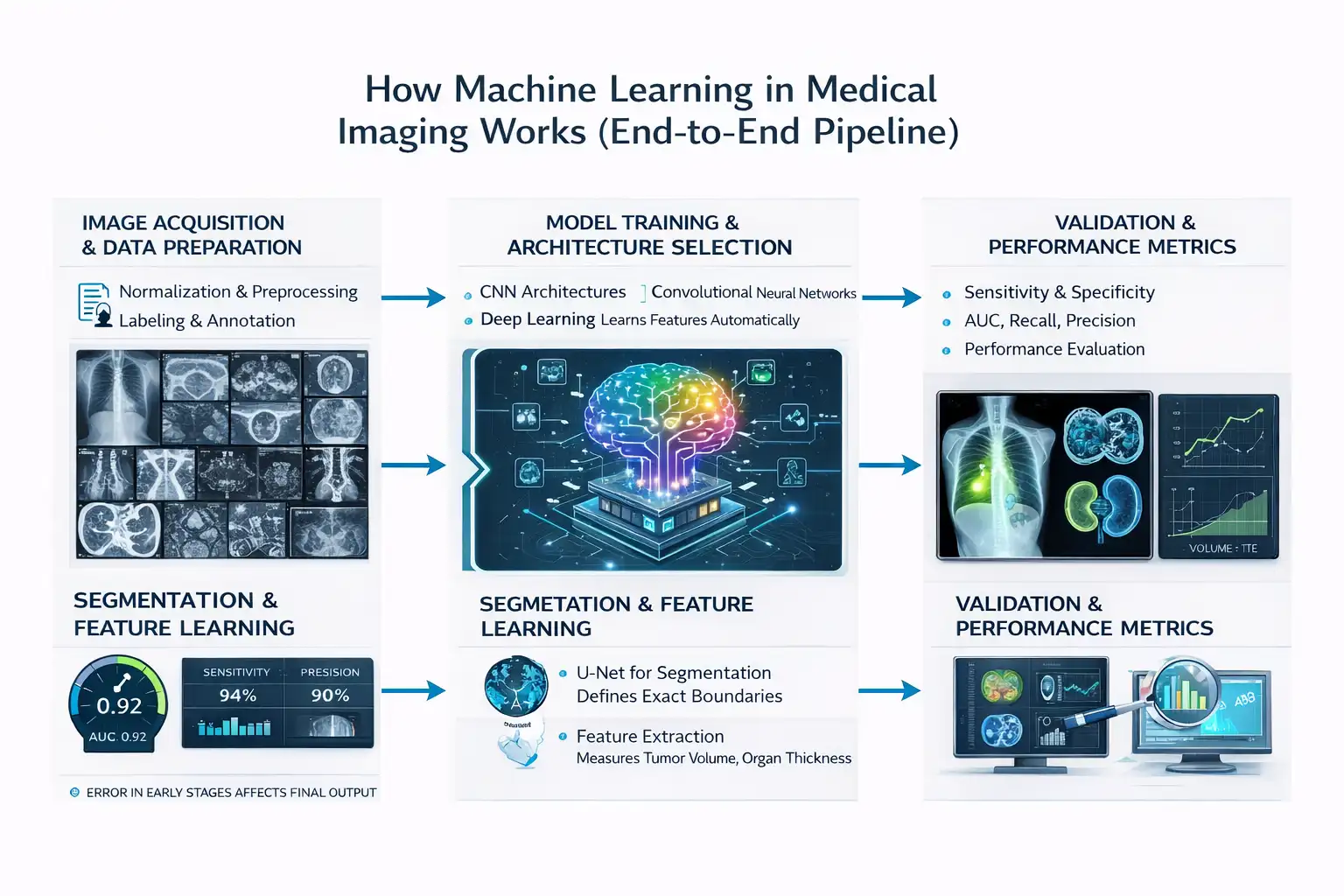

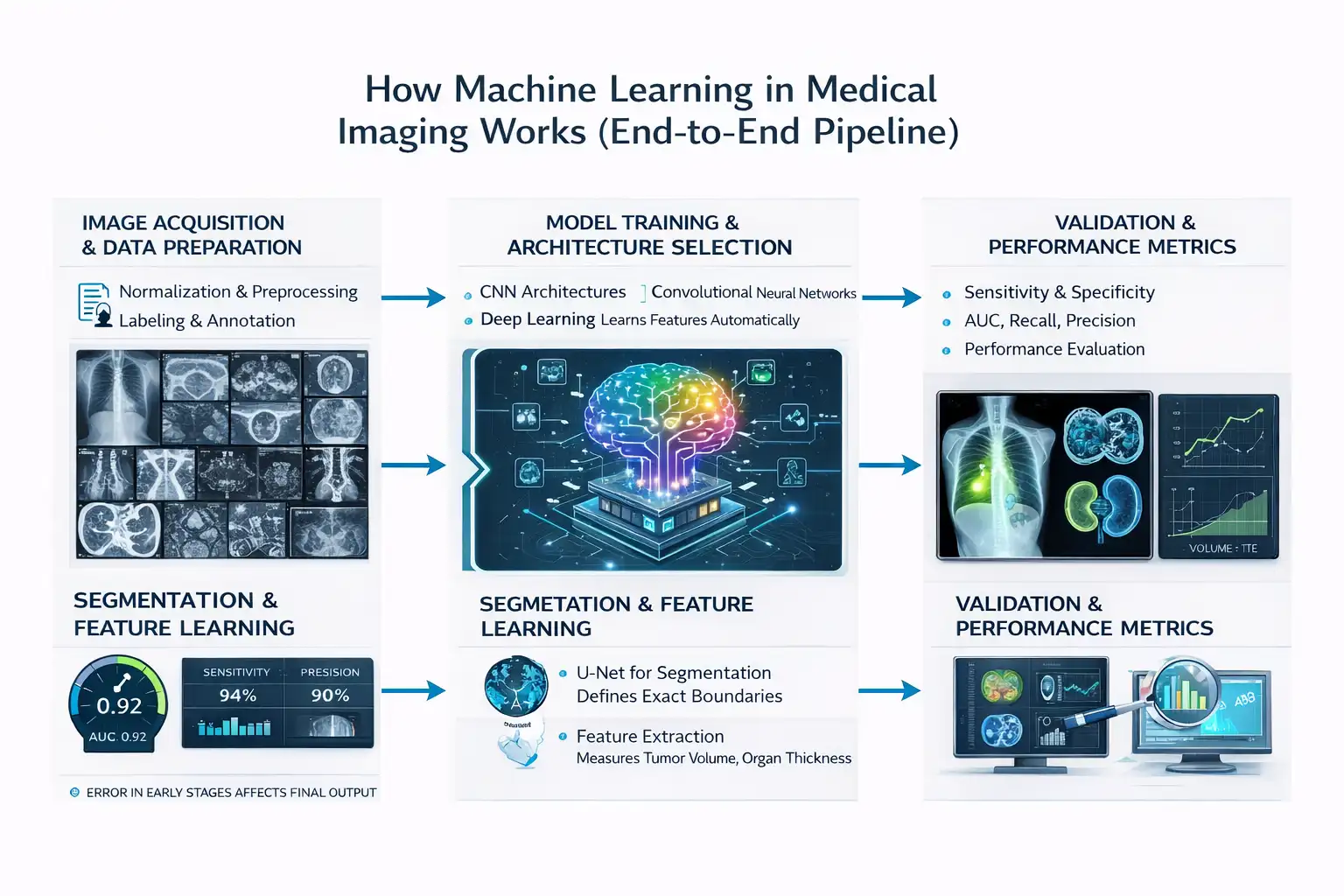

The pipeline behind machine learning in medical imaging follows a clear sequence from raw scan to clinical decision. Each stage depends on the previous one, which means errors early in the process affect the final output.

Image Acquisition and Data Preparation

The first step collects images from modalities such as X-ray, CT, MRI, and ultrasound. Hospitals produce billions of imaging studies globally each year, which creates the raw material for training models. Public datasets such as fastMRI, released by NYU Langone Health, provide more than 1 million MRI images for research use.

The next step prepares this data through normalization and preprocessing. Normalization aligns pixel intensity values so scans from different machines remain comparable. Without this adjustment, a model may misinterpret scanner variation as disease. However, a single fixed rescaling does not work for every modality: MRI intensities, for example, have no standardized units, so such scans typically need per-scan or per-protocol normalization instead.

Labeling then defines what the model must learn. Radiologists annotate tumors, fractures, and lesions directly on images, and this task demands high expertise and time.

Model Training and Architecture Selection
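Before a model trains on these scans, the normalization step from the data preparation stage can be sketched as follows. This is a minimal illustration rather than a production pipeline: the function names `window_ct` and `zscore`, the window settings (center 40, width 400), and the toy pixel values are all assumptions chosen for the example. CT values are expressed in Hounsfield units, so a fixed intensity window is meaningful; MRI intensities have no fixed scale, so the sketch falls back to a per-scan z-score.

```python
import numpy as np

def window_ct(hu, center=40.0, width=400.0):
    """Clip CT Hounsfield units to a window and rescale to [0, 1].

    The default center/width are illustrative assumptions, not a
    recommendation for any specific clinical task.
    """
    lo, hi = center - width / 2.0, center + width / 2.0
    return (np.clip(hu, lo, hi) - lo) / (hi - lo)

def zscore(image, eps=1e-8):
    """Per-scan z-score: subtract the scan mean, divide by its std."""
    image = image.astype(np.float64)
    return (image - image.mean()) / (image.std() + eps)

# Toy 2x2 "slices" standing in for real scans (hypothetical values).
ct_slice = np.array([[-1000.0, 0.0], [40.0, 3000.0]])
mri_slice = np.array([[120.0, 340.0], [560.0, 780.0]])

ct_norm = window_ct(ct_slice)   # values now lie in [0, 1]
mri_norm = zscore(mri_slice)    # zero mean, roughly unit variance
```

With this kind of step in place, two scanners that report different raw intensity ranges feed the model values on a comparable scale.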

The training phase selects an architecture, most often a convolutional neural network (CNN). These models process images layer by layer to identify edges, shapes, and complex patterns linked to pathology. Studies have shown that CNN-based systems can reach performance levels comparable to specialists in controlled tasks.

This stage relies on deep learning, where models learn features automatically from raw data. Unlike older rule-based systems, engineers do not manually define disease markers. Instead, the network generally becomes more accurate as it trains on larger labeled datasets.

Segmentation and Feature Learning

Segmentation defines exact anatomical boundaries within a scan. Many researchers apply U-Net to medical image segmentation because it performs well even with limited data. Research also shows that U-Net-based CNN variants achieve high accuracy in tumor segmentation and boundary detection tasks.

Feature learning then extracts measurable values such as tumor volume or organ thickness. These structured outputs allow clinicians to track disease progression objectively. This measurable structure connects raw pixels to clinical decisions.

Validation and Performance Metrics
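For segmentation outputs like those described in the previous stage, two quantities are often checked first: the volume of the segmented region and its overlap with a radiologist's reference mask. The sketch below assumes binary masks stored as NumPy arrays and voxel spacing given in millimeters. The Dice coefficient is used here as one common overlap measure; it is an assumption for illustration, not something the pipeline above prescribes, and the helper names and toy masks are hypothetical.

```python
import numpy as np

def mask_volume_ml(mask, spacing_mm):
    """Volume of a binary mask in milliliters, given voxel spacing in mm."""
    voxel_mm3 = float(np.prod(spacing_mm))
    return float(mask.astype(bool).sum()) * voxel_mm3 / 1000.0

def dice(pred, truth):
    """Dice overlap between two binary masks (1.0 means identical)."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    denom = pred.sum() + truth.sum()
    if denom == 0:
        return 1.0  # both masks empty: treat as perfect agreement
    return float(2.0 * np.logical_and(pred, truth).sum() / denom)

# Toy 4x4x4 volume: the reference marks 8 voxels, the prediction marks 12.
truth = np.zeros((4, 4, 4), dtype=bool)
truth[1:3, 1:3, 1:3] = True
pred = np.zeros_like(truth)
pred[1:3, 1:3, 1:4] = True

spacing = (1.0, 1.0, 2.0)               # hypothetical voxel size in mm
volume = mask_volume_ml(pred, spacing)  # predicted lesion volume in mL
score = dice(pred, truth)               # overlap with the reference mask
```

Tracking `volume` across follow-up scans is one way the "tumor volume" measurement mentioned above turns into a longitudinal trend.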

Validation determines whether the model performs safely in real settings. Clinicians examine sensitivity and specificity to understand detection strength and error rates. High sensitivity reduces missed disease, while high specificity limits false positives, which can otherwise trigger unnecessary follow-ups.

Performance evaluation also includes AUC, recall, and precision. AUC measures how well the model separates positive from negative cases across thresholds. Recall captures how many true cases the model detects (it is the same quantity as sensitivity), and precision reflects how many predicted positives are correct. Reporting these together helps expose problems caused by dataset bias and class imbalance.

Core Models Powering Imaging Systems
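As a concrete reference for the validation metrics described in the previous section, the following sketch computes sensitivity, specificity, precision, and AUC with plain NumPy. The labels, scores, and the 0.5 decision threshold are made-up toy values, and the function names are hypothetical. AUC is computed here by directly counting how often a positive case outscores a negative one, which is fine for small examples but not tuned for large datasets.

```python
import numpy as np

def confusion_metrics(y_true, y_score, threshold=0.5):
    """Sensitivity (recall), specificity, and precision at one threshold.

    Assumes both classes and at least one predicted positive are present,
    so none of the denominators is zero.
    """
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_score) >= threshold
    tp = np.sum(y_pred & y_true)
    fp = np.sum(y_pred & ~y_true)
    fn = np.sum(~y_pred & y_true)
    tn = np.sum(~y_pred & ~y_true)
    return {
        "sensitivity": float(tp / (tp + fn)),
        "specificity": float(tn / (tn + fp)),
        "precision": float(tp / (tp + fp)),
    }

def auc(y_true, y_score):
    """Probability that a random positive scores above a random negative."""
    y_true = np.asarray(y_true).astype(bool)
    scores = np.asarray(y_score, dtype=float)
    pos, neg = scores[y_true], scores[~y_true]
    diff = pos[:, None] - neg[None, :]   # every positive/negative pair
    wins = np.sum(diff > 0) + 0.5 * np.sum(diff == 0)
    return float(wins / (len(pos) * len(neg)))

# Toy data: 3 diseased and 5 healthy cases (imbalanced on purpose).
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_score = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.2, 0.1]

metrics = confusion_metrics(y_true, y_score)
area = auc(y_true, y_score)
```

Lowering the threshold in this toy example would raise sensitivity at the cost of specificity, which is the trade-off clinicians weigh when choosing an operating point.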

Modern imaging systems rely on deep learning architectures that extract patterns from pixel data. These models classify disease, segment organs, and detect anomalies in scans.

CNN and 3D CNN Approaches

Convolutional neural networks remain the foundation of most imaging systems. CNNs scan images through filters that detect edges, textures, and shapes linked to disease. According to one report, CNN-based systems matched clinicians in diagnostic imaging performance across multiple specialties.

3D CNNs extend this idea to volumetric data. Instead of analyzing single slices, they process entire CT or MRI volumes, which helps capture spatial relationships between slices. Studies show that 3D CNN models improved lung nodule detection accuracy compared to 2D approaches in volumetric CT data.

Vision Transformers in Imaging

Vision Transformers handle images differently by analyzing global relationships across the entire scan. In medical imaging, Vision Transformer models divide images into patches and use attention mechanisms to understand how regions relate to each other.

Transformers perform best when large annotated datasets exist. However, they require more computational power and memory than CNNs. In practice, hospitals often combine CNN and transformer models to balance efficiency and performance.

Application of Machine Learning in Medical Imaging

The real value of machine learning in medical imaging becomes clear when you examine real clinical use cases. Hospitals apply these systems to detect disease earlier, reduce diagnostic variability, and improve workflow efficiency. Each application connects image data to measurable clinical outcomes.

Cancer Detection and Diagnosis

Cancer detection remains one of the most advanced use cases. AI-assisted CT scan interpretation helps detect lung nodules by analyzing shape, density, and growth patterns across slices. This structured analysis improves early identification in screening programs.

Breast cancer screening also relies on automated classification models. These systems analyze mammograms to flag suspicious masses, which supports faster second review by specialists. Earlier detection can improve survival rates in high-risk populations.

Brain Tumor Segmentation

Brain tumor segmentation depends heavily on AI-based MRI analysis. Algorithms measure tumor volume and define exact boundaries within soft tissue structures. Clear segmentation supports accurate surgical planning and radiation targeting.

Automated segmentation also reduces variation between radiologists. Consistent tumor measurement improves longitudinal monitoring during therapy.

Cardiac Imaging and Risk Prediction

Cardiac imaging systems now support coronary risk analysis. Models analyze CT data to detect plaque buildup and arterial narrowing. This quantitative assessment improves cardiovascular risk stratification.

These systems also contribute to predictive imaging analytics by combining scan findings with patient history. Doctors use these insights to identify patients who may require early intervention.

Retinal Disease Screening

Retinal screening programs use automated analysis to detect diabetic retinopathy in primary care clinics. Portable fundus cameras capture images, and algorithms evaluate retinal damage within seconds. This setup increases access to screening in underserved regions.

Automated systems also scale efficiently across large populations. That scalability supports preventive care at a national level.

Fracture Detection in Emergency Care

Fracture detection systems assist busy emergency departments. Computer vision models detect subtle bone discontinuities in X-ray images. Faster identification improves triage during high patient inflow.

These systems provide structured confidence scores. Clinicians review flagged regions before confirming a diagnosis.

Neurological Disorder Classification

Neurological imaging models detect structural brain changes linked to dementia and movement disorders. Algorithms analyze MRI patterns to identify early degeneration markers. Early pattern recognition supports proactive treatment planning.

Across these use cases, execution matters as much as the model itself. At Webisoft, we design and deploy AI imaging systems end-to-end, from architecture planning to secure enterprise integration. Our team builds production-ready solutions that align with clinical workflows and real operational constraints.

Benefits of Machine Learning in Medical Imaging

The benefits of machine learning in medical imaging go beyond automation. These systems improve accuracy, speed, and clinical consistency across imaging departments. Each advantage directly connects to measurable patient and operational outcomes.

Enhanced Diagnostic Accuracy

Improved accuracy stands as the primary benefit. Algorithms trained for medical image analysis detect subtle pixel-level changes linked to tumors, fractures, and organ abnormalities.

Consistency also improves across readers. Standardized model outputs reduce inter-observer variability, which often occurs between radiologists reviewing complex scans.

Faster Interpretation and Triage

Faster interpretation reduces reporting delays. AI systems integrated into radiology workflows flag high-risk cases before full review. This prioritization helps emergency teams respond faster to stroke, trauma, and pulmonary embolism cases.

Speed also supports large screening programs. Automated analysis allows radiologists to focus on complex cases instead of routine scans.

Early Disease Detection

Earlier detection improves long-term outcomes. Systems designed for CT scan interpretation identify small lung nodules and vascular abnormalities at early stages. A 2021 meta-analysis reported that AI diagnostic systems achieved pooled sensitivity and specificity levels comparable to those of clinicians across multiple imaging specialties.

Earlier diagnosis directly supports better survival rates. Timely intervention changes treatment pathways.

Workflow Automation and Productivity

Workflow automation reduces repetitive manual tasks. Medical imaging workflow automation systems segment organs, measure lesions, and pre-fill structured reports. This automation lowers administrative burden and reduces cognitive fatigue during long shifts.

Reduced fatigue supports more stable diagnostic performance. Consistent output improves department efficiency.

Personalized and Predictive Care

Personalized care becomes more practical with structured imaging data. Predictive models combine scan findings with clinical variables to estimate patient risk. One study reported that AI-based imaging biomarkers improved cardiovascular risk stratification compared to traditional scoring alone.

This predictive capability shifts imaging from reactive diagnosis to proactive monitoring. Doctors can intervene before complications escalate.

Improved Image Quality and Reconstruction

Image quality enhancement strengthens diagnostic confidence. Deep learning models improve low-dose CT reconstruction and reduce noise in MRI scans. Research demonstrated that AI-based reconstruction preserved diagnostic detail while lowering radiation exposure.

Safer imaging practices benefit patients directly. Clearer images support more confident clinical decisions.

Build Smarter Medical Imaging Systems with Webisoft.

Book a free consultation and explore secure, scalable AI solutions for clinical imaging workflows.

Challenges of Using Machine Learning in Medical Imaging

The challenges of using machine learning in medical imaging affect accuracy, trust, and deployment. These issues go beyond model design and extend into data, regulation, and workflow. Understanding these barriers helps healthcare teams adopt AI responsibly.

Data Quality and Annotation Limits

Data quality stands as the first major challenge. Models need thousands of accurately labeled scans, and expert annotation takes significant time. Studies have shown that inconsistent labeling across institutions directly reduces model reliability.

Limited data diversity also affects performance. If datasets come from a narrow patient group, models struggle in broader clinical settings. This limitation increases diagnostic error when models are deployed widely.

Generalizability and Domain Shift

Generalizability creates risk during real-world deployment. A model trained on one scanner type may perform poorly on another due to image variation. Differences in protocols, demographics, and hardware introduce domain shift that lowers accuracy.

Hospitals often detect this issue only after implementation. Continuous monitoring becomes necessary to maintain stable performance.

Interpretability and Clinical Trust

Interpretability affects clinical acceptance. Many deep learning systems cannot clearly explain why they flagged a region as abnormal. Without transparent reasoning, doctors hesitate to rely fully on predictions.

Trust improves when systems provide visual heatmaps or confidence scores. However, explainability methods still require refinement.

PACS and Workflow Integration

Integration with PACS (picture archiving and communication systems) and the surrounding workflow determines operational success. AI tools must connect directly with imaging storage systems without slowing radiology workflow. If integration disrupts reporting speed, clinical teams resist adoption.

Seamless data exchange reduces friction. Efficient integration supports faster review and prioritization.

Bias and Fairness Concerns

Bias emerges when training data lacks representation. If certain age groups or ethnic populations appear less frequently in datasets, prediction errors increase for those patients. Research highlighted that unmonitored bias in AI health technologies can worsen healthcare inequality by perpetuating existing disparities. [Source: The Lancet Digital Health]

Developers must test fairness metrics before deployment. Balanced datasets reduce this structural risk.

Regulatory and Compliance Barriers
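One simple pre-deployment check for the bias concern raised above, and the kind of evidence a validation study might include, is to compare sensitivity across patient subgroups. The sketch below is an illustration under stated assumptions: the group labels "A" and "B", the toy predictions, and the function name `sensitivity_by_group` are hypothetical, and a real audit would use larger samples and additional metrics.

```python
import numpy as np

def sensitivity_by_group(y_true, y_pred, groups):
    """Sensitivity (true-positive rate) computed separately per subgroup."""
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_pred).astype(bool)
    groups = np.asarray(groups)
    rates = {}
    for g in np.unique(groups):
        positives = (groups == g) & y_true       # diseased cases in group g
        n = positives.sum()
        # NaN marks a group with no diseased cases to evaluate.
        rates[str(g)] = float((y_pred & positives).sum() / n) if n else float("nan")
    return rates

# Toy data: group B is under-represented and detected less reliably.
y_true = [1, 1, 1, 1, 0, 1, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0]
groups = ["A", "A", "A", "A", "A", "B", "B", "B"]

rates = sensitivity_by_group(y_true, y_pred, groups)
gap = max(rates.values()) - min(rates.values())  # spread between subgroups
```

A large `gap` does not prove the model is unfair, but it flags a subgroup whose data and error cases deserve a closer look before rollout.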

Regulatory approval adds complexity. Authorities require validation studies, risk assessment, and post-market monitoring before clinical use. These safeguards protect patients but slow product rollout.

Healthcare institutions must also maintain documentation and audit trails. Compliance demands ongoing oversight rather than one-time approval.

Workflow Integration and Cost

Workflow integration challenges daily operations. Hospitals must connect AI tools to imaging systems without disrupting radiologists’ routines. Poor integration can increase workload instead of reducing it.

Infrastructure cost also affects adoption. High-performance servers, cybersecurity controls, and maintenance teams require sustained investment.

Future Trends of Machine Learning in Medical Imaging

The future of machine learning in medical imaging will focus on deeper clinical integration and smarter models. Hospitals now expect AI to move beyond detection and support full diagnostic reasoning. New research directions show clear movement toward scale, privacy, and predictive care.

Foundation Models and Generative AI

Foundation models will change how imaging systems learn. Instead of training small models for each task, large pre-trained systems will adapt to multiple imaging problems.

Generative systems will also expand training datasets. Synthetic image generation helps reduce data scarcity while maintaining privacy safeguards. This approach supports safer model development in regulated environments.

Multimodal Imaging and Diagnostic AI

Future systems will rely on multimodal medical imaging AI. These models combine MRI, CT, PET, and ultrasound data within one unified framework. Integrating multiple sources improves diagnostic context and reduces isolated decision errors.

Multimodal diagnostic AI will also combine imaging with lab results and clinical history. This structured integration supports more precise treatment planning.

Federated and Privacy-Preserving Learning

Federated learning will expand collaborative research. Hospitals can train models locally without transferring raw patient data. This privacy-focused approach aligns with regulatory expectations and reduces compliance risk.

Broader collaboration improves dataset diversity. More diverse data improves generalizability across populations.

Advanced Architectures and Vision Transformers
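To make the federated learning idea from the previous section concrete, the sketch below shows one common aggregation scheme, federated averaging, in which a coordinator combines locally trained model weights in proportion to each site's dataset size. This is an assumption for illustration: the text above does not name a specific algorithm, and the function name, the three "hospital" weight vectors, and the dataset sizes are all hypothetical. Only weights and sample counts leave each site, never the scans.

```python
import numpy as np

def federated_average(site_weights, site_sizes):
    """Combine per-site weight vectors, weighted by local dataset size."""
    stacked = np.stack(site_weights)             # shape: (sites, params)
    sizes = np.asarray(site_sizes, dtype=float)
    return np.average(stacked, axis=0, weights=sizes)

# Hypothetical weights after one round of local training at three hospitals.
site_weights = [
    np.array([1.0, 2.0]),
    np.array([3.0, 4.0]),
    np.array([5.0, 6.0]),
]
site_sizes = [100, 300, 600]                     # local training scans

global_weights = federated_average(site_weights, site_sizes)
```

In a full system this step would repeat over many rounds, with the averaged weights sent back to each hospital as the starting point for the next round of local training.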

Transformer-based systems will become more common in medical imaging applications. These architectures capture long-range spatial relationships better than traditional convolutional models. As computing resources improve, adoption will increase in large-scale hospital networks.

Hybrid systems that combine convolution layers and attention mechanisms will balance efficiency and accuracy. This approach reduces hardware demands while improving contextual understanding.

Edge AI and Real-Time Imaging

Edge deployment will allow real-time analysis during scanning. Imaging devices may soon integrate AI-driven image reconstruction to enhance clarity during acquisition. Real-time processing shortens diagnosis time in emergency settings.

Embedded AI will also support automated quality control. This automation reduces rescans and improves patient throughput.

Explainable and Quantitative AI

Explainable systems will remain a priority. Clinicians require transparent reasoning before trusting automated predictions. Visual explanation maps and structured confidence outputs will support clinical oversight.

Quantitative analysis will expand beyond detection. Future tools will measure lesion size, tissue density, and structural progression in a standardized way. This shift turns imaging into a predictive and monitoring tool rather than a single-point diagnostic step.

These trends show that machine learning in medical imaging will move toward scalable, privacy-aware, and context-driven intelligence.

How Webisoft Supports Medical Imaging AI Development

Machine learning in medical imaging demands more than model training. It requires production-grade engineering, secure architecture, and long-term system stability. Webisoft approaches these projects with a focus on reliability, compliance, and scalable deployment.

Machine Learning Expertise

Advanced ML pipelines are designed around real imaging workflows, not isolated prototypes. Data preprocessing, model optimization, and structured validation form the foundation of every build. Our expertise from AI-driven platforms such as ConQuerence AI and Maxa AI reflects the ability to convert complex machine learning systems into stable SaaS products.

Performance benchmarks guide every release. Models must demonstrate repeatable accuracy and operational consistency before deployment.

Healthcare Compliance and Security

Healthcare platforms must meet strict privacy and security requirements. Architectures are structured to support encrypted storage, controlled access, and secure data transfer. Our work on enterprise systems like Genium 360 and Exmar illustrates experience in building secure, scalable digital infrastructures.

Audit readiness is planned from the start. This reduces compliance friction during regulatory reviews.

Engineering and Deployment Support

Successful AI solutions depend on clean system integration. Backend systems, frontend interfaces, and DevOps pipelines are aligned to ensure seamless deployment. Our experience from platforms such as Proprio Direct, BidXpert, and Edigo demonstrates delivery of high-performance, production-ready environments.

Post-deployment stability remains a priority. Version control, system monitoring, and infrastructure updates protect long-term reliability.