How to Build a Machine Learning Model Step by Step

- BLOG

- Uncategorized

- January 3, 2026

Building an effective AI system starts with understanding how machine learning models are designed and trained. Rather than relying on predefined rules, modern systems learn from data, identify patterns, and apply those patterns to make predictions, classifications, or decisions in real time.

Across real-world AI applications, this approach enables systems to forecast trends, recognize images, understand language, and automate decisions at scale. When engineered correctly, models improve through data and feedback, reducing manual effort while increasing accuracy and operational efficiency.

This blog explains how to build a machine learning model, how it learns, how different model types function, and how these systems are deployed reliably in real-world products.

Contents

- 1 What Is a Machine Learning Model in AI

- 2 How to Build a Machine Learning Model Step by Step

- 2.1 Step 1: Define the Problem and Objective

- 2.2 Step 2: Collect and Validate Data

- 2.3 Step 3: Clean and Preprocess the Data

- 2.4 Step 4: Perform Exploratory Data Analysis and Feature Engineering

- 2.5 Step 5: Split Data Into Training, Validation, and Testing Sets

- 2.6 Step 6: Select the Appropriate Machine Learning Algorithm

- 2.7 Step 7: Train the Machine Learning Model

- 2.8 Step 8: Evaluate Model Performance

- 2.9 Step 9: Optimize Through Hyperparameter Tuning

- 2.10 Step 10: Deploy the Model Into Production

- 2.11 Step 11: Monitor, Maintain, and Retrain

- 3 Machine Learning Algorithm vs Machine Learning Model

- 4 Types of Machine Learning Models Used in AI

- 5 How a Machine Learning Model Learns From Data

- 6 Build a production-ready machine learning model with Webisoft!

- 7 Challenges of Building a Machine Learning Model

- 7.1 Data Quality and Availability Constraints

- 7.2 Complex and Resource-Intensive Data Preprocessing

- 7.3 Infrastructure and Compute Limitations

- 7.4 Difficulty in Model and Algorithm Selection

- 7.5 Training, Tuning, and Generalization Risks

- 7.6 Integration With Existing Systems

- 7.7 Reproducibility, Explainability, and Governance

- 7.8 Monitoring, Drift, and Continuous Retraining

- 7.9 Operationalizing ML With CI/CD and MLOps

- 8 Real World AI Use Cases Powered by Machine Learning Models

- 9 How We Choose the Right Machine Learning Model

- 10 Build a production-ready machine learning model with Webisoft!

- 11 Conclusion

- 12 FAQs

What Is a Machine Learning Model in AI

A machine learning model is a trained computational system that learns patterns from data to make predictions, classifications, or decisions on new inputs. In AI applications, this model is created when a machine learning algorithm is trained on historical data and learns how different inputs relate to expected outcomes.

The goal is not to follow fixed instructions, but to generalize from examples. The machine learning model in AI systems functions as the core decision engine. Data flows into the model, internal parameters process that information, and the model produces an output such as a score, label, or prediction.

The surrounding AI software relies on this output to determine behavior during real time use. This behavior differs from rules-based logic. Traditional systems depend on predefined conditions written for every possible scenario.

An AI machine learning model replaces rigid rules with learned statistical relationships. When new data patterns appear, the model can be retrained rather than rewritten, which makes it suitable for complex and changing environments.

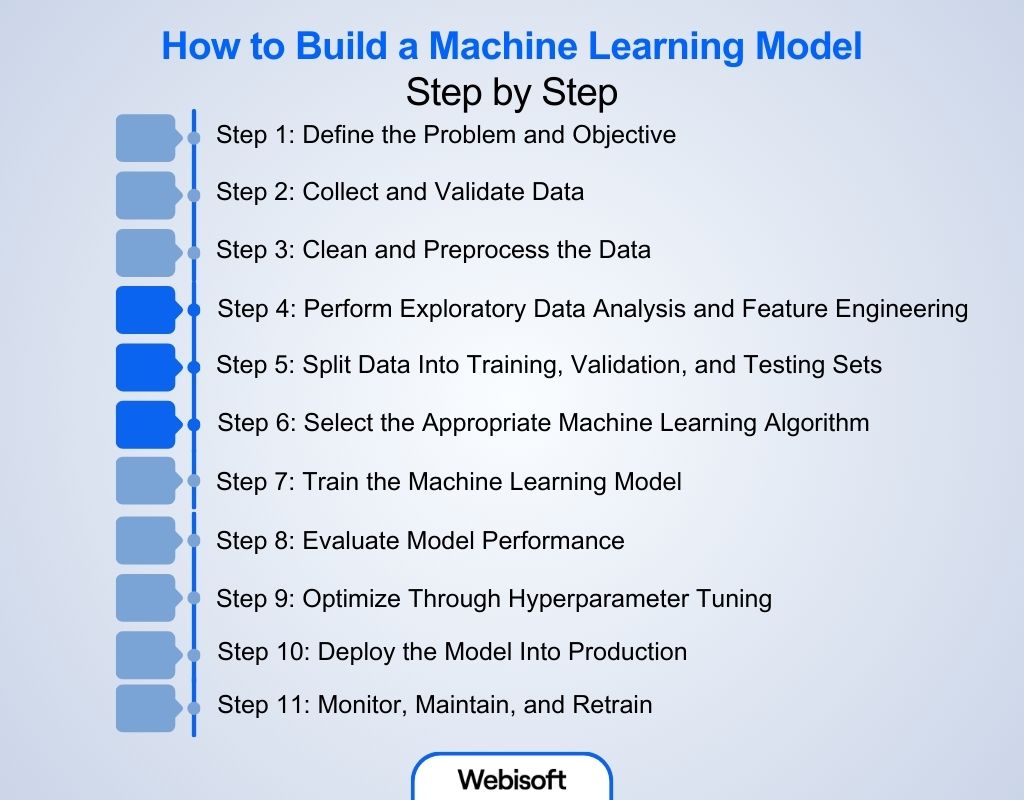

How to Build a Machine Learning Model Step by Step

Building a machine learning model is a structured engineering process, not a single training action. A production-ready machine learning model in AI moves through clearly defined stages, from problem definition to long-term monitoring.

Building a machine learning model is a structured engineering process, not a single training action. A production-ready machine learning model in AI moves through clearly defined stages, from problem definition to long-term monitoring.

Following a disciplined workflow reduces bias, improves accuracy, and ensures the AI machine learning model performs reliably in real-world conditions.

Step 1: Define the Problem and Objective

Every successful machine learning model starts with a clearly defined problem. This step establishes what the model must predict, classify, or optimize and why the outcome matters.

You must identify the business or research goal, determine whether the task involves regression, classification, clustering, or forecasting, and define the expected output format. Poorly defined objectives often lead to misaligned models, even when training accuracy appears high.

Step 2: Collect and Validate Data

Data forms the foundation of all machine learning models. Collect relevant, high-quality, and representative data from sources such as databases, sensors, APIs, surveys, or public datasets.

Data may be proprietary, sourced from existing datasets, combined from multiple sources, or synthetically generated. Early validation is critical to detect missing values, noise, imbalance, or systematic bias that can undermine the machine learning model in AI later.

Step 3: Clean and Preprocess the Data

Raw data cannot be used directly by a machine learning algorithm. This step ensures the dataset is accurate, consistent, and usable.

Common preprocessing tasks include removing duplicates, correcting incorrect entries, handling missing values, scaling numerical features, and encoding categorical variables. Proper preprocessing stabilizes training and reduces unintended bias in the final AI machine learning model.

Step 4: Perform Exploratory Data Analysis and Feature Engineering

Exploratory Data Analysis reveals patterns, correlations, anomalies, and distributions within the dataset. Visualizations and statistical summaries help guide model design decisions.

Feature engineering transforms raw inputs into representations that are easier for machine learning models to learn from. In many cases, effective feature engineering improves performance more than switching algorithms or increasing model complexity.

Step 5: Split Data Into Training, Validation, and Testing Sets

To evaluate generalization fairly, the dataset must be split into separate subsets. Training data teaches the model, validation data supports tuning, and testing data measures real-world performance.

Stratified splits are often used for classification to preserve class balance. This separation prevents overfitting and provides an honest assessment of the machine learning model.

Step 6: Select the Appropriate Machine Learning Algorithm

This step highlights the machine learning algorithm vs model distinction. The algorithm defines how learning occurs, while the trained model stores learned knowledge. Regression and classification problems typically use a supervised machine learning model.

Pattern discovery and anomaly detection rely on an unsupervised learning model. Large, unstructured datasets such as images or text may justify a deep learning model, though simpler approaches often outperform complex ones on smaller datasets.

Step 7: Train the Machine Learning Model

Training applies the selected machine learning algorithm to the prepared dataset. The model makes predictions, computes error using a loss function, and updates parameters iteratively to reduce that error.

Monitoring learning curves during training helps detect convergence issues, overfitting, or instability early in the process.

Step 8: Evaluate Model Performance

After training, the machine learning model is tested on unseen data to measure reliability. Metrics such as accuracy, precision, recall, F1-score, RMSE, MAE, or R² are selected based on the task.

Comparing training and testing results reveals underfitting, overfitting, or data leakage that must be addressed before deployment.

Step 9: Optimize Through Hyperparameter Tuning

Hyperparameters control model behavior but are not learned during training. Techniques such as grid search, random search, or Bayesian optimization help identify configurations that improve generalization without unnecessarily increasing complexity unnecessarily. Optimization is iterative and often requires revisiting earlier steps in the workflow.

Step 10: Deploy the Model Into Production

Deployment integrates the machine learning model in AI systems through APIs, services, or pipelines. The model must meet latency, scalability, and reliability constraints to function in real environments. Training and inference pipelines are architecturally separate. Only the trained model is executed in production.

Step 11: Monitor, Maintain, and Retrain

Real-world data changes over time. Monitoring detects model drift, bias, and performance degradation. Retraining with updated data ensures the AI machine learning model remains accurate and aligned with evolving conditions. Continuous maintenance transforms a trained model into a sustainable production system.

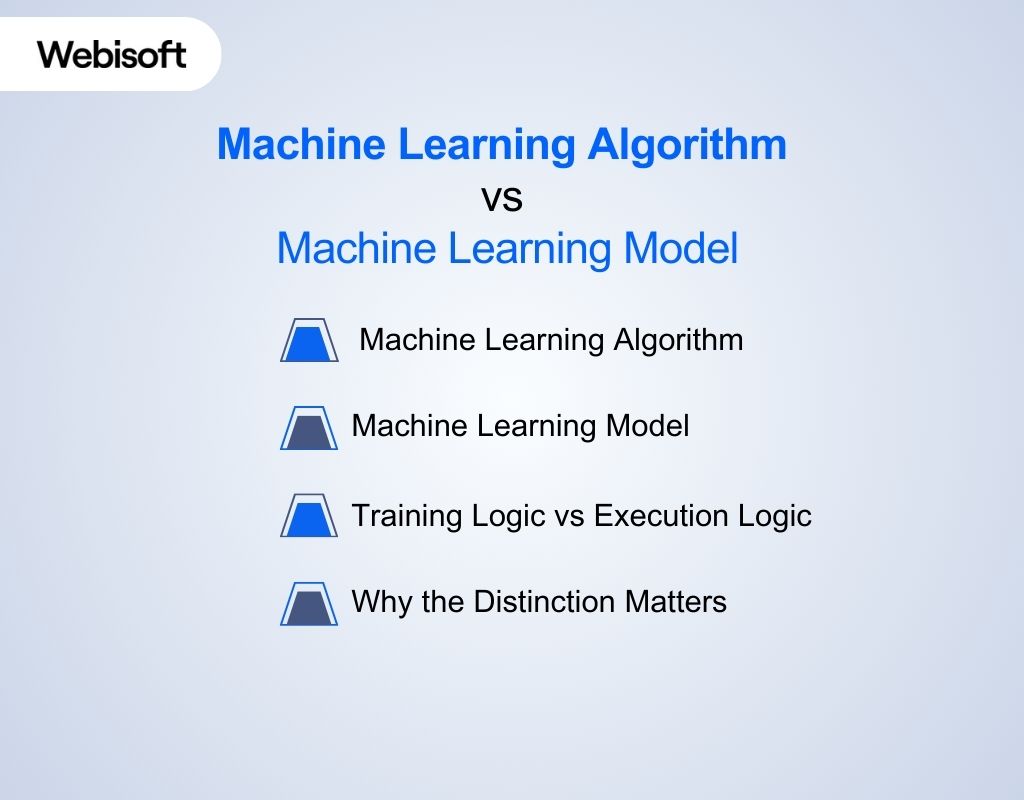

Machine Learning Algorithm vs Machine Learning Model

The difference between a machine learning model and an algorithm is a common source of confusion in AI, especially when teams are learning how to build a machine learning model correctly. While closely related, they serve different purposes in how intelligent systems learn and operate. One defines the learning process, while the other represents the learned result.

The difference between a machine learning model and an algorithm is a common source of confusion in AI, especially when teams are learning how to build a machine learning model correctly. While closely related, they serve different purposes in how intelligent systems learn and operate. One defines the learning process, while the other represents the learned result.

Machine Learning Algorithm

A machine learning algorithm is the learning mechanism, not the intelligence itself. It defines how a system transforms data into parameters by specifying an objective, an optimization strategy, and update rules. The algorithm governs how learning happens, not what is learned.

Critically, an algorithm has no memory of past training runs. It carries no domain knowledge. Gradient descent, backpropagation, or decision tree splitting logic behave identically regardless of whether they are applied to medical data, financial data, or images.

Their role ends once training converges. From an engineering standpoint, algorithms are training-time constructs. They shape models but never appear in production inference paths. Treating algorithms as deployed intelligence is a category error.

Machine Learning Model

A machine learning model is the learned artifact produced by training. It is a concrete, parameterized function that maps inputs to outputs using values derived from historical data. This is where knowledge actually resides and why understanding how to build a machine learning model goes beyond selecting an algorithm. Models persist.

They are serialized, versioned, deployed, monitored, and eventually replaced. A trained neural network, a fitted regression equation, or a learned decision tree all represent frozen learning outcomes. During inference, the model executes deterministically.

No learning occurs unless retraining is explicitly initiated. In real systems, the model is the unit of accountability. Accuracy, bias, latency, and drift all attach to the model, not the algorithm that produced it.

Training Logic vs Execution Logic

The cleanest way to resolve confusion is to separate learning from decision execution. Training is an optimization problem. Data flows through an algorithm, parameters are adjusted, and error is minimized. Inference is a computation problem.

Inputs flow through a fixed structure to produce outputs under strict performance constraints. Libraries often obscure this boundary by exposing training and inference through the same object. That convenience hides a fundamental architectural separation.

Conceptually, training pipelines and inference pipelines solve different problems and should be reasoned about independently.

Why the Distinction Matters

Confusing algorithms with models leads to poor system design decisions. It blurs responsibility when models fail, complicates deployment discussions, and weakens monitoring strategies. At a deeper level, machine learning is best understood as automatic program synthesis.

Algorithms are the procedures that write programs by tuning parameters. Models are the programs that result from that process and actually run in the system. Once this framing is internalized, the terminology stops being ambiguous.

Algorithms explain how learning occurs. Models embody what learning produced. That separation aligns with how real-world machine learning systems are built, deployed, and maintained.

Types of Machine Learning Models Used in AI

Machine learning models used in AI systems are best understood along two dimensions, especially when evaluating how to build a machine learning model for real-world use.

Machine learning models used in AI systems are best understood along two dimensions, especially when evaluating how to build a machine learning model for real-world use.

The first is how feedback is provided during learning. The second is what kind of decision the model is expected to make. Ignoring either dimension leads to shallow explanations and category confusion.

At a practical level, models exist to solve concrete tasks such as predicting numbers, assigning categories, grouping data, or optimizing decisions over time. The learning paradigm determines how those tasks are learned.

Supervised Learning Models

Supervised learning models operate when outcomes are known during training. The presence of labeled data allows the model to learn a direct mapping between inputs and targets. Within AI systems, supervised learning dominates because it aligns cleanly with business objectives and measurable performance.

Supervised models typically fall into regression and classification tasks. Regression models predict continuous numerical values, such as prices, demand levels, or risk scores.

Linear regression, polynomial regression, decision tree regression, random forest regression, and support vector regression all exist to model numerical relationships with varying complexity and bias-variance tradeoffs.

Classification models, by contrast, assign inputs to predefined categories. Logistic regression, support vector machines, decision trees, random forests, Naive Bayes, k-nearest neighbors, and gradient boosting variants like XGBoost or LightGBM are designed to separate data into classes based on learned decision boundaries.

In production AI systems, classification underpins spam detection, fraud identification, medical diagnosis, and content moderation.

Unsupervised Learning Models

Unsupervised learning models operate without labeled outcomes. Instead of predicting known answers, they discover structure within data.

These models are not primarily decision engines. They are pattern-discovery engines that AI systems use to understand data before acting on it. A major category here is clustering, where models group similar data points based on feature similarity. K-means, DBSCAN, and hierarchical clustering are commonly used for customer segmentation, behavior analysis, and exploratory discovery.

Another important category is dimensionality reduction, which compresses high-dimensional data while preserving essential structure. Techniques like PCA and LDA are often used to simplify inputs, reduce noise, or enable visualization before supervised learning is applied.

Unsupervised learning also includes anomaly detection, where the goal is to identify rare or abnormal patterns. Isolation Forests and Local Outlier Factor models are frequently embedded in AI systems for fraud detection, system monitoring, and security use cases.

Finally, association learning focuses on discovering relationships between items rather than grouping them. Algorithms like Apriori, FP-Growth, and Eclat uncover co-occurrence patterns that power recommendation engines and market basket analysis.

Semi-Supervised Learning Models

Semi-supervised learning models acknowledge a common real-world constraint: labeled data is scarce, expensive, or incomplete. These models use a small labeled dataset to guide learning across a much larger unlabeled dataset.

In practice, semi-supervised models often start with supervised signals and expand learning using clustering, representation learning, or synthetic label generation.

This approach is common in domains like medical imaging, natural language processing, and speech recognition, where manual labeling does not scale but unlabeled data is abundant.

Rather than replacing supervised learning, semi-supervised learning extends it. It enables AI systems to improve generalization without incurring proportional increases in labeling costs.

Reinforcement Learning Models

Reinforcement learning models are fundamentally different from dataset-driven approaches. They learn through interaction, not examples. Instead of labels, the model receives rewards or penalties based on its actions and learns strategies that maximize long-term outcomes.

Within reinforcement learning, value-based methods such as Q-learning and Deep Q-Networks estimate the expected reward of actions. Policy-based methods like policy gradients and PPO learn action-selection strategies directly. Actor-critic methods combine both approaches to stabilize learning.

In more advanced systems, model-based reinforcement learning learns an internal representation of the environment to simulate future outcomes, with techniques like Monte Carlo Tree Search enabling planning.

These models are used when decisions affect future states, such as robotics, game playing, dynamic pricing, and autonomous navigation, and they significantly influence how to build a machine learning model for interactive systems.

Deep Learning Models Across Paradigms

Deep learning is not a separate learning paradigm. It is a modeling approach that can be applied across supervised, unsupervised, semi-supervised, and reinforcement learning.

Artificial neural networks, convolutional neural networks, recurrent architectures like LSTMs and GRUs, sequence-to-sequence models, autoencoders, transformers, and GANs all serve different representational needs.

They excel when data is large, unstructured, and high-dimensional, such as images, text, audio, and video. In modern AI systems, deep learning models often act as the representational backbone, while the learning paradigm determines how those representations are trained and used.

How a Machine Learning Model Learns From Data

A machine learning model learns by observing data, making predictions, measuring mistakes, and adjusting itself to improve accuracy. This learning process allows the model to generalize patterns and apply them to new, unseen inputs inside AI systems.

A machine learning model learns by observing data, making predictions, measuring mistakes, and adjusting itself to improve accuracy. This learning process allows the model to generalize patterns and apply them to new, unseen inputs inside AI systems.

Model Parameters Explained Simply

Model parameters are internal values that control how a machine learning model makes decisions. These parameters might represent weights, thresholds, or coefficients depending on the model type. During learning, the model adjusts these values to better match patterns found in data.

You can think of parameters as dials inside the model. Each adjustment slightly changes how inputs are interpreted. Over time, these small changes allow the model to improve its predictions without changing its structure.

Role of Training Data

Training data provides the examples a model learns from. In a supervised machine learning model, the data includes both inputs and correct outputs, which helps the model learn direct input to output relationships.

In contrast, an unsupervised learning model receives only inputs and must identify structure or similarity on its own. The quality and relevance of training data directly affect learning. If the data is biased, incomplete, or outdated, the model will learn flawed patterns. This is why data preparation plays a critical role in model performance.

Loss Function and Optimization Logic

Learning happens by measuring error and reducing it. A loss function calculates how far the model’s predictions are from the correct outcome. This value guides learning by showing how well the model is performing. Optimization methods then adjust parameters to reduce this error.

Over many iterations, the model improves by making smaller and more precise corrections. This process applies across learning styles, including reinforcement scenarios where a deep learning model may adjust behavior based on rewards or penalties rather than labeled outcomes.

Through repeated feedback and adjustment, the model gradually learns patterns that allow accurate predictions on new data.

Build a production-ready machine learning model with Webisoft!

Book a free consultation to design, train, and deploy machine learning models for real AI products.

Challenges of Building a Machine Learning Model

Understanding how to build a machine learning model also means understanding why many ML initiatives fail after early experimentation. These challenges span data, infrastructure, modeling decisions, deployment, and long-term maintenance of the AI machine learning model.

Understanding how to build a machine learning model also means understanding why many ML initiatives fail after early experimentation. These challenges span data, infrastructure, modeling decisions, deployment, and long-term maintenance of the AI machine learning model.

Data Quality and Availability Constraints

A machine learning model is only as reliable as the data used to train and run it. Poor data quality leads directly to inaccurate, unstable, or discriminatory outcomes.

Common issues include missing values, inconsistent formats, bias, imbalance, and unverifiable data provenance. Teams must ensure sufficient data volume, validate sources, and confirm that training data accurately represents real-world conditions the machine learning models will encounter.

Complex and Resource-Intensive Data Preprocessing

Raw data is rarely usable without significant preprocessing. Cleaning, normalization, encoding, and feature extraction require deep domain knowledge and consume substantial time and computing resources.

In many projects, preprocessing becomes more expensive than training the machine learning algorithm itself. Errors at this stage propagate silently and degrade the final machine learning model in AI.

Infrastructure and Compute Limitations

Building and training modern machine learning models, especially a deep learning model, demands scalable storage, high-throughput networking, and specialized compute such as GPUs.

Cloud platforms provide elasticity but introduce cost management challenges as training workloads scale. Poor infrastructure planning often results in stalled experimentation or runaway operational expenses.

Difficulty in Model and Algorithm Selection

Selecting the right approach is rarely straightforward. There are dozens of algorithms and architectures, each with different tradeoffs. Misunderstanding the machine learning algorithm vs model distinction leads teams to overcomplicate solutions or deploy unsuitable architectures.

Choosing between a supervised machine learning model, an unsupervised learning model, or a hybrid approach often requires iterative experimentation and experienced ML architects.

Training, Tuning, and Generalization Risks

Training introduces multiple risks. Overfitting causes models to memorize training data and fail in production. Underfitting results in overly simplistic models that miss critical patterns. Hyperparameter tuning is time-consuming and computationally expensive, yet necessary to balance performance and generalization in machine learning models.

Integration With Existing Systems

A deployed AI machine learning model must integrate with data pipelines, applications, hardware systems, and enterprise software. Integration failures often arise from mismatched data schemas, latency constraints, or incompatibilities with legacy systems. Successful integration requires coordination between ML engineers, software developers, and IT infrastructure teams.

Reproducibility, Explainability, and Governance

As machine learning model in AI systems influence critical decisions, organizations must ensure reproducibility and explainability.

Models should produce consistent outputs under identical conditions and allow traceability for audits, compliance, and debugging. Lack of explainability undermines trust, especially in regulated industries, and complicates ethical and legal accountability.

Monitoring, Drift, and Continuous Retraining

Building a machine learning model is not a one-time effort. Data distributions evolve, user behavior changes, and external conditions shift.

Without monitoring, model drift leads to silent performance degradation. Selecting meaningful metrics, defining KPIs, and operationalizing retraining pipelines remain persistent challenges for teams managing production machine learning models.

Operationalizing ML With CI/CD and MLOps

Traditional DevOps practices do not fully address ML-specific needs. ML workflows require versioned data, reproducible environments, automated testing, secure pipelines, deployment automation, performance monitoring, and continuous training.

Integrating CI/CD and MLOps practices is essential to scale how to build a machine learning model beyond experimentation into reliable production systems.

Real World AI Use Cases Powered by Machine Learning Models

A machine learning model enables AI systems to analyze large volumes of data, recognize patterns, and make decisions automatically. These models operate across industries, supporting tasks that would be inefficient or impossible with rule-based software.

A machine learning model enables AI systems to analyze large volumes of data, recognize patterns, and make decisions automatically. These models operate across industries, supporting tasks that would be inefficient or impossible with rule-based software.

Recommendation Systems

Recommendation systems use a supervised machine learning model to learn from user behavior such as clicks, views, purchases, and watch history. Platforms like Netflix and Amazon rely on these models to suggest movies, shows, or products tailored to each user, demonstrating how to build a machine learning model that adapts continuously.

Beyond entertainment and retail, recommendation logic is also used in content feeds, online learning platforms, and social media suggestions such as “people you may know.” The model improves relevance over time as it learns from new interactions.

Fraud Detection

Fraud detection systems commonly rely on an unsupervised learning model to identify abnormal patterns in financial transactions. Instead of matching fixed fraud rules, the model learns what normal behavior looks like and flags deviations in real time.

Banks and payment platforms use these models to detect suspicious card activity, prevent phishing attempts, and monitor account takeovers. This approach is critical because fraud patterns evolve faster than manual rules.

Computer Vision

Computer vision applications are powered by a deep learning model trained on large image and video datasets. These models allow AI systems to recognize objects, faces, and visual patterns with high accuracy.

Real-world uses include medical image analysis for disease diagnosis, facial recognition in devices, traffic monitoring, autonomous vehicles, and quality inspection in manufacturing. The model replaces manual visual inspection with scalable automation.

Natural Language Processing

Natural language processing relies on a machine learning model in AI to interpret text and speech. These models analyze language structure, context, and intent to understand or generate human-like responses.

Common examples include virtual assistants such as Siri and Alexa, email spam filtering, customer support chatbots, sentiment analysis, and cybersecurity tools that detect malicious messages. NLP models allow AI systems to interact with users through language rather than commands.

How We Choose the Right Machine Learning Model

At Webisoft, we approach machine learning model selection as a strategic decision, not a technical shortcut, especially when guiding teams on how to build a machine learning model that performs reliably in production.

At Webisoft, we approach machine learning model selection as a strategic decision, not a technical shortcut, especially when guiding teams on how to build a machine learning model that performs reliably in production.

We focus on aligning the machine learning model with business objectives, data realities, and long-term product reliability. Our methodology is designed to deliver AI systems that perform consistently in real-world conditions.

Define the Problem

We begin by clearly defining the problem the AI solution must address. This includes understanding the operational context, expected outcomes, and measurable success criteria.

Whether the use case involves fraud detection, forecasting, classification, or personalization, we ensure the machine learning model in AI is purpose-driven rather than exploratory.

Assess Data Readiness and Constraints

80% of machine learning models fail to reach production, mainly due to data quality, deployment complexity, and lack of monitoring. Data quality directly determines model viability.

We evaluate data volume, structure, labeling availability, and update frequency before making any modeling decisions. This assessment helps us determine whether a simpler approach is sufficient or whether more advanced techniques are justified.

Align Model Type With the Problem

Once the problem and data are understood, we map them to the appropriate learning approach. Predictive tasks with labeled outcomes typically use supervised methods. Pattern discovery problems benefit from unsupervised techniques. Interactive systems may require reinforcement-based learning.

This alignment clarifies the machine learning algorithm vs model distinction early and ensures the learning approach matches the operational need.

Performance, Interpretability, and Operational Cost

Model accuracy is important, but it is not the only factor we consider. In regulated or high-risk environments, we prioritize interpretability so decisions can be reviewed and justified.

In performance-sensitive systems, we account for latency, scalability, and infrastructure cost. Our goal is to select a model that performs reliably in production, not just in controlled experiments.

Prototyping and Comparative Testing

We establish a baseline model and then evaluate multiple candidates against real data. Performance is measured using relevant metrics, with a strong focus on generalization to unseen inputs.

Only models that demonstrate stability and consistency progress further. This iterative evaluation reduces overfitting risk and supports long-term maintainability.

Deployment and Continuous Improvement

Before finalizing a model, we ensure it integrates seamlessly with existing systems and infrastructure. Monitoring, retraining, and lifecycle management are planned from the start to support evolving data and business needs.

This disciplined approach ensures the selected machine learning model remains effective, scalable, and aligned with long-term objectives.

Build a production-ready machine learning model with Webisoft!

Book a free consultation to design, train, and deploy machine learning models for real AI products.

Conclusion

As AI adoption grows across industries, knowing how to build a machine learning model correctly has become essential for delivering reliable, scalable, and impactful AI systems. Success depends on following a disciplined process that spans problem definition, data preparation, model selection, deployment, and continuous monitoring.

If you are looking to build or optimize an AI solution, Webisoft helps you design, train, and deploy machine learning models. Contact us to build models that perform reliably in real-world products.

FAQs

1. What is a machine learning model?

A machine learning model is a computational system that learns patterns from historical data and applies them to new inputs. It uses learned parameters to make predictions, classifications, or decisions. The model improves as it is exposed to more relevant data.

2. How does a machine learning model work?

A model processes input data, applies learned mathematical relationships, and produces an output. During training, it compares predictions with actual results and adjusts internal parameters. After training, it uses this learned logic to handle unseen data.

3. Is deep learning a machine learning model?

Deep learning is a subset of machine learning, not a single model. It uses neural network-based models with multiple layers to learn complex patterns. These models are especially effective for images, text, audio, and large datasets.

4. What is the difference between AI and ML models?

Artificial intelligence is the broader concept of machines performing intelligent tasks. Machine learning models are specific systems within AI that learn from data instead of relying on fixed rules. Not all AI systems use machine learning.

5. How are ML models trained?

ML models are trained by feeding them data and comparing their predictions with expected outcomes. A loss function measures errors, and optimization methods adjust model parameters. This process repeats until the model reaches acceptable performance levels.